mvidner

Improve yast devtools

an invention by jreidinger

There is now a bunch of YaST devtools, but most of them are obsolete or useful only for YCP development, which is now dead. It is also a mixture of tools to build a package, develop a single package, and the new yast meta for making changes to all modules developed by the YaST team. So the goal is

Kill YCP Zombies by Compiling Ruby to Ruby

a project by mvidner

During the YCP Killer project, Y2R didn't translate most YCP operators and builtins into equivalent Ruby constructs but into library calls. This was necessary to preserve behavior in various edge-case situations, mostly when nil was passed around. The resulting code is often long and hard to work with.

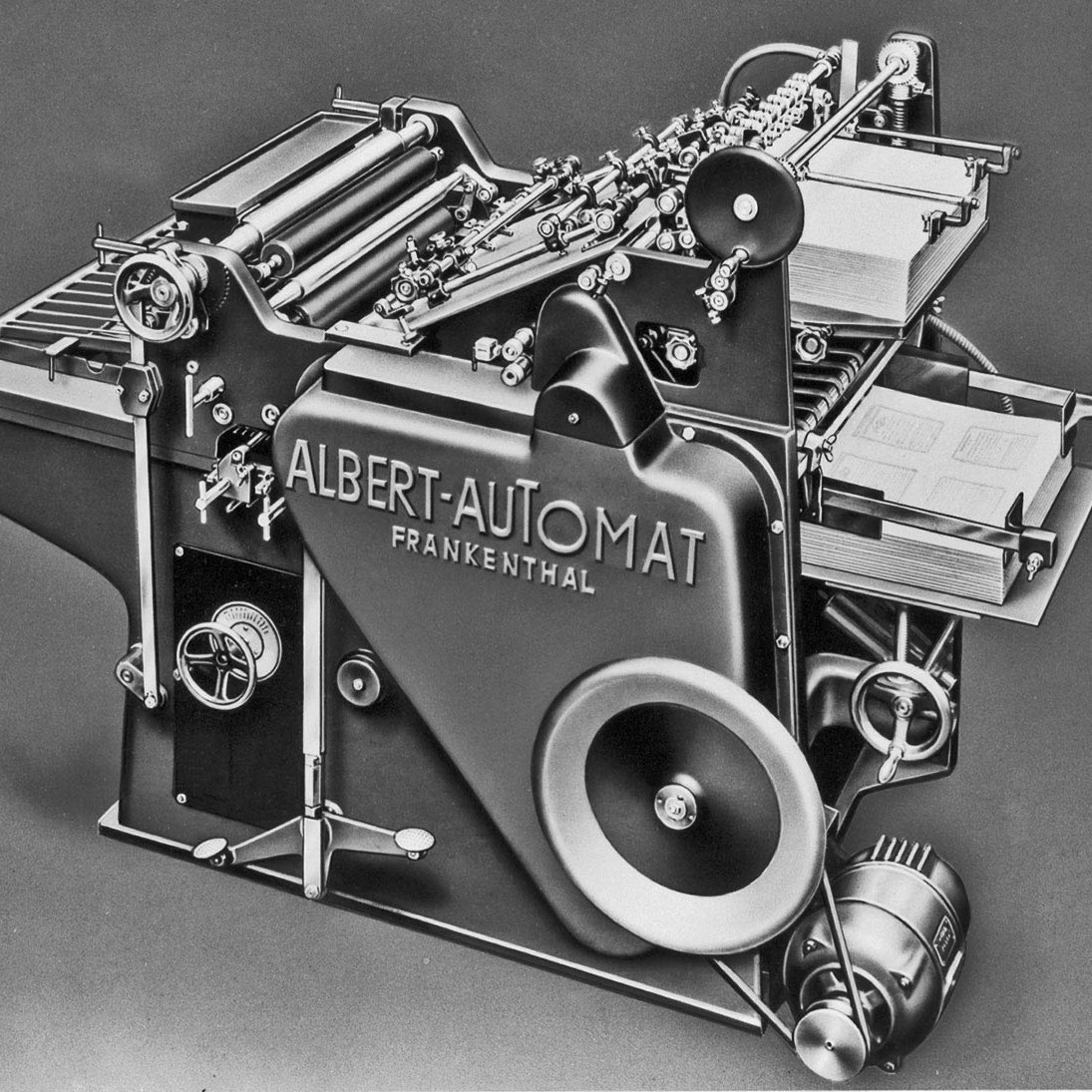

Faster Raspberry Pi Builds for SUSE Studio

an invention by bkutil

Intro

In order to be able to throw pies faster and distribute them even to remote SUSE colonies, we need to build an advanced antimatter-fueled pie hyper-accelerator.

Make ruby-ui usable for YaST

an idea by dmacvicar

ruby-ui was a hackweek project with jreidinger to make libyui (YaST text/graphical engine) usable from pure-ruby without going through YCP.

A SUSE chronicle 0.1

a project by rhaidl

Talking to people, getting the information about what had happened in the SUSE history, bringing all together to kind of a chronicle. Let's give it a try :-)

Twopence

an invention by e_bischoff

Twopence is (will be) a remote execution engine for tests, able to run tests in virtual machines and on real hardware through various means of communication: virtio for KVM/QEMU, ssh on top of libssh, and serial lines. This library can be called from shell and ruby wrappers.

Resistance is Futile - Using zypper to "upgrade" CentOS/RHEL to openSUSE/SLES

a project by RBrownSUSE

zypper is magic

SUSE Bug Query Engine

a project by LPechacek

In short, give a second wind to http://hall.suse.de/bugs/defects.cgi.

bug screening helper

a project by bmwiedemann

The Problem: many bugs filed for openSUSE go to the screening-team by default and often remain there for weeks, so that developers (who would be interested in analyzing or fixing these bugs) do not learn about them. However, the screening process is a hard one

List of open GitHub pull requests in a card on a team Trello board

a project by vlewin

Write a simple command line tool that fetches the open pull requests from GitHub and puts them into a Trello card. The tool should periodically update the list of pull requests. In addition, it would be great to have a connection between the Trello card and the GitHub pull requests.
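
The formatting step of such a tool could be sketched as follows. This is only an illustration under stated assumptions: the function name `format_card_description` and the sample data are made up, the input is assumed to be a list of pull request objects carrying the `number`, `title`, and `html_url` fields that GitHub's REST API returns, and the card description is assumed to be rendered as Markdown. Fetching from GitHub and writing to Trello are deliberately left out.

```python
def format_card_description(pulls):
    """Render open pull requests as a Markdown list for a card description.

    `pulls` is assumed to be a list of dicts with the keys `number`,
    `title` and `html_url`, as found in GitHub pull request objects.
    """
    if not pulls:
        return "No open pull requests."
    lines = []
    # Sort by PR number so repeated runs produce a stable description.
    for pr in sorted(pulls, key=lambda p: p["number"]):
        # Each entry links back to the pull request on GitHub.
        lines.append(f"- [#{pr['number']} {pr['title']}]({pr['html_url']})")
    return "\n".join(lines)


# Hypothetical sample data, not taken from any real repository.
sample = [
    {"number": 12, "title": "Fix typo", "html_url": "https://example.com/pr/12"},
    {"number": 7, "title": "Add tests", "html_url": "https://example.com/pr/7"},
]
print(format_card_description(sample))
```

Because every list entry is a link to the pull request, the card itself provides a simple connection back to GitHub; a periodic job would only need to regenerate the description and overwrite the old one.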

Experiment with uselessd as a systemd replacement on openSUSE 13.1

an invention by dsterba

The base version for uselessd is systemd-208, which is the version used in 13.1. Let's see whether a direct substitution of the binaries works and watch out for problems.

Video presence system for distributed teams

a project by ancorgs

Those working remotely or managing a distributed team know it: face time is invaluable. The former openSUSE team has been using http://sqwiggle.com to keep in touch and Google hangout to hold a stand up meeting every morning.

openSUSE 13.2 ARM hackathon

a project by algraf

openSUSE 13.2 is taking shape on ARM, but we need to make sure we smoothen its edges to make an actual release out of it. The goal of this project is to make sure all devices we should run on actually work and that the last few packages necessary for productive use of ARM devices work properly on 13.2.

Moses machine translation performance tuning

a project by marxin

Moses is a statistical machine translation system that allows you to automatically train translation models for any language pair. The intention of the project is to tune the existing software, where a quick glimpse shows that the majority of time is consumed by memory allocation, dynamic casting, and other work unrelated to the actual calculation. I would like to inspect many techniques (like perf profiling, GCC LTO, GCC profile-guided optimization, code refactoring, OpenTuner, etc.) which may bring a really significant performance gain. Moreover, it would be really beneficial to come up with a cookbook that can be used by folks in general. If possible, I would like to create step-by-step performance improvement graphs.

Make sure bicho works with current bugzilla

an idea by dmacvicar

Bicho is a Ruby gem to query Bugzilla. I have received some reports that it is not working with current Bugzilla. Maybe you want to learn Ruby and fix it.

Travis CI support for Yast

an invention by lslezak

Description

Bookworm, the educational tool

a project by kwwii

Create a system to allow a community to add contextual information to "open books". Think wikipedia for books

try to understand cups > 1.5

a project by mhocko

Starting with CUPS 1.6, things have changed considerably. Clients no longer discover broadcast printers. Distributions (e.g. Debian) have backported the original protocol into the cups-daemon package, but this doesn't seem to work on my laptop either. I would like to look and try to understand what the heck is going on here.

Find Socket and Pipe Partners

a project by eeich

For debugging purposes, one often needs to know the communication partner on a socket or pipe a program has open. This information is not

Learn and help learn

a project by kstreitova

I have been at SUSE for about a month, and as a fresh graduate I had to learn a lot of stuff during this period. And there is a bunch of other things I will have to learn, of course. Therefore I would like to use Hackweek to deepen my knowledge of various tools, processes, techniques, and other packaging-related stuff. However, it would be quite a pity to keep the acquired information just to myself. So I would like to preserve the results of my learning for further use, either by enhancing the Innerweb wiki or the public openSUSE wiki, or by creating a new wiki for packagers' purposes.

A platform a day keeps the doctor away

a project by insilmaril

Finish Qt 5 port of vym and port to as many operating systems as possible.

yast2-fonts

an invention by pgajdos

- czech translation

- [ ] turn antialiasing off -> [x] font antialiasing

Get started with Qt

a project by moskyto

Learn Qt and make something to try it out.

Investigate ruby apis for jenkins and libvirt

an idea by vmoravec

And consider making use of them in QA infrastructure

one-click distribution from web page

an idea by mhocko

Maybe this is something we already know, but I haven't found it. I found it really cool how easily Debian can be installed from Windows machines. Just have a look at http://goodbye-microsoft.com/

Automate to save time for hacking

a project by locilka

The YaST team has great experience in automating tasks that can be done by machines, in order to save time that can be put to better use. We usually use Jenkins for running these jobs.

Continue development of generic job server in haskell with primary focus on continuous integration

a project by yac

Continue development of generic job server in haskell with primary focus on continuous integration and later possibly as support tool for data analysis in semantic file storage server, software configuration engine, etc

Tool to update images in an OpenStack Cloud

an idea by tbechtold

Currently there is an internal OpenStack instance (cloud.suse.de). Most of the images there are outdated, so it's common that everybody just uploads a new image. It would be nice to have a tool which updates at least the most common images (SLE11&12, openSUSE, CentOS, Ubuntu, Debian, Fedora) automatically once a day. So after spawning a new VM, there would be no need to first update (and maybe reboot) the machine or upload a new image before you can start to work.

Google Hangouts killer: WebRTC-based video conferencing system

a project by ancorgs

We have some internal systems for videoconferencing like Big Blue Button or OpenMeetings. But in my experience none of them can compare to Google Hangouts, which is still the best free (as in free beer) alternative for videoconferencing with integrated screen sharing.

YaST module for smartmontools

an idea by sbrabec

smartmontools has a number of options that fine tune disk checking, periodic tests, short tests, values to monitor, values to ignore.

Explore Clojure with Project Euler

a project by bkutil

As a part of this hackweek, I'd like to take a look at Clojure and use it to solve as many problems as possible from Project Euler.

Internal shared images repository

a project by ancorgs

During the last CSM workshop we identified the need to have a good way to share the images we use for testing. We have documented the requirements and the current status in this wiki page (we even have a diagram).

Jangouts development workshop

a project by ancorgs

We are right now testing a patch to Janus that will hopefully give us the stability we were missing in http://jangouts.suse.de. As a consequence, it's reasonable to expect a wider usage of Jangouts inside the company. Thus, I want to share maintainership of Jangouts as much as possible. The more developers know how to fix errors and implement features, the better.

Qt based chinese learning program

a project by mvetter

The Idea

For some time I have been interested in getting better at C++ and learning more about the Qt framework. Since I learn best by having a project/goal, I came up with this:

get a CNC Gcode generator to work on openSUSE

a project by bmwiedemann

My hobby project is about using Lego mindstorms to turn a lathe / turning machine into a CNC.

Learn Haskell by creating an interpreter

an idea by chnyda

The aim of the project is to create a stupid interpreter to evaluate arithmetic expressions and functions. I have been reading a lot about Haskell and creating a stupid interpreter is a nice way to get started.

Multimedia insane migration

a project by scarabeus_iv

Packman reduction

Exporting ansible experience to Salt

an idea by dgutu

Because of past experience with Ansible as a tool to orchestrate code deployment on multiple platforms, I consider it important to get the most from Salt as

Don't write tests! Generate them.

an invention by e_bischoff

The title of this project is inspired by the must-see video

Markdown extension for Jianpu (Numbered musical notation)

a project by scateu

As we know, we have ABC notation and GNU Lilypond for staff notation. They take ASCII as input and generate music scores and even MIDI output, which is very convenient for people typing music on a computer.

Try to understand and use Lilypond format to generate musical scores

an idea by sndirsch

See title

x86 instructions decoder

a project by bpetkov

This is the tool I've been working on since HW11 and it needs more work. Actually, there's always something which could be done on it. It is basically an x86 instruction decoder with special emphasis on the kernel and decoding interesting pieces of it in order to help in the development of low-level patching techniques, among others.

Let’s Encrypt integration into openSUSE/SLE

a project by abergmann

Static analyzer of Lua language

a project by NalaGinrut

I'm trying to write a static analyzer for the Lua programming language. I've already done some parts, such as the lexer, parser, AST, types, etc.

Shipping everything

a project by cschum

Writing code is wonderful, but it gets its real value when it's released and shipped to the world. You know the mantra: "Release early, release often".

Get rust into Tumbleweed

a project by KGronlund

With rust 1.9 released, it should from now on be possible to bootstrap rustc from the previous version of rustc (so 1.10 can be built using 1.9 etc.). This means that it should now be possible to create a rustc package which no longer needs binary snapshots to build, meaning that we might even be able to submit rustc for inclusion in openSUSE Tumbleweed.

Study DBus

a project by cxiong

As DBus is a main component in Linux user space, in this hackweek I plan to learn more about it.

YaST2 code reorganization

a project by ancorgs

YaST code organization is a mess at many levels (files location, namespaces, code dependencies...). Recently we created this gist to put some of the issues on the table

Simulate SD card in software

a project by algraf

To make OpenQA work with real ARM devices, we need to control

Learn about GNU Hyperbole, an Enhancement for Emacs

a project by keichwa

"GNU Hyperbole is an open, efficient, programmable information management and hypertext system for GNU Emacs." ()

Improve packagers' life

a project by kstreitova

Every packager encounters boring manual tasks every once in a while and these tasks can most probably be automated to some extent. During Hackweek I aim to try and identify such cases in various packagers' workflow and consider creating a tool that would make these tasks easier. Also, I would like to find out whether there is a demand for such tool. In that case, this Hackweek project will turn into a long-term task I plan to keep working on.

flatpak (previously xdg-app) runtime based on openSUSE / flatpak support for OBS

a project by fcrozat

Flatpak (previously known as xdg-app) is a bundle system, based on ostree, for easily making application bundles available to users. Currently, Flatpak is available on openSUSE Tumbleweed, but we don't ship any runtime based on openSUSE (freedesktop or GNOME runtime).

Task manager in Elixir/Erlang

a project by vmoravec

Elixir is a Ruby-ish dialect of Erlang with meta-programming capabilities; this is my first project using it: pedro. The idea is to create a task manager that would organize tasks (jobs) and manage them in projects. It will run locally, remotely, or both in a multi-node setup, provide a CLI, and have a web UI relying on HTTP and websockets.

AuthStralia — (almost) stateless authorization ecosystem for a web age

an invention by kpimenov

AngularJS, Websockets, REST APIs for mobile apps, one-time links for emails — what’s the topmost complexity all those things share in common?

Local voice recognition for home automation

a project by jenspinney

There are several popular ways of controlling home automation with voice today. Amazon Echo and Google Home both allow users to control lights, speakers, etc. with a simple voice command.

[openSUSE] speed up distro rebuild time by analyzing rebuild graph

a project by lnussel

The openSUSE build service could build hundreds of packages in parallel but in practice serial package dependencies prevent that.
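
To illustrate why serial dependencies limit parallelism, the longest dependency chain in a rebuild graph can be computed as below. This is a minimal sketch, not the project's actual method: the function name `longest_chain`, the graph representation (a dict mapping each package to the packages it needs at build time), and the sample packages are all assumptions, and the graph is assumed to be acyclic, which real distribution graphs may not be.

```python
from functools import lru_cache


def longest_chain(deps):
    """Return the longest dependency chain as a list, dependencies first.

    `deps` maps a package name to the packages it depends on.
    The graph is assumed to contain no cycles.
    """
    @lru_cache(maxsize=None)
    def chain(pkg):
        best = ()
        # The chain ending in pkg extends the longest chain of its deps.
        for dep in deps.get(pkg, ()):
            candidate = chain(dep)
            if len(candidate) > len(best):
                best = candidate
        return best + (pkg,)

    return list(max((chain(p) for p in deps), key=len, default=()))


# Hypothetical rebuild graph for illustration only.
deps = {
    "app": ["libfoo", "libbar"],
    "libfoo": ["gcc"],
    "libbar": [],
    "gcc": [],
}
print(longest_chain(deps))
```

However many workers are available, the packages on this chain have to be built one after another, so its length is a lower bound on the number of sequential build steps. Shortening or breaking such chains is one place where a rebuild-graph analysis could look for savings.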

Ideas about local community involvement

a project by vsvecova

The plan is to gather ideas about how SUSE can become a more integral part of the local tech community scene (in PRG, NUE, or other locations). As a person who has been involved in educating women about tech for some time, I am thinking of introductory workshops and meetups, aimed not necessarily only at a female audience.

Reverse engineer memory layout

an invention by mkoutny

TL;DR: Use convolution to find type candidates, then solve a system of equations to refine the result.

YaST Integration Tests Using Cucumber

a project by lslezak

Currently we use openQA for the YaST integration tests. It runs YaST in a VM and controls it by emulating keyboard input. The result is checked by comparing the screenshots.

More ruby in YaST

a project by jreidinger

In general, the plan for YaST is to use only Ruby in the future. So the goal of this project is to move that forward and replace more parts with Ruby.

Parser to extract function names from openQA lib/ functions - improve perl skills

a project by jorauch

Since there is no real documentation about openQA's lib/ functions I wanted to kill two birds with one stone and write a parser in perl that extracts all function names (and maybe preceding comments) in said directory and improve my perl knowledge by doing this.
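
The extraction idea can be sketched in a few lines. The project itself targets Perl; the version below is written in Python purely as an illustration, and the function name `extract_subs`, the regular expression, and the sample source are assumptions rather than anything taken from openQA. It only recognizes named `sub` declarations at the start of a line and `#` comments directly above them, and it ignores POD, anonymous subs, and heredocs.

```python
import re

# Matches a named Perl sub declaration at the start of a line.
SUB_RE = re.compile(r"^\s*sub\s+([A-Za-z_]\w*)")


def extract_subs(source):
    """Return (name, comment) pairs for each named sub in Perl source text."""
    results = []
    comment = []
    for line in source.splitlines():
        stripped = line.strip()
        match = SUB_RE.match(line)
        if match:
            # Attach the comment block found directly above the sub.
            results.append((match.group(1), " ".join(comment)))
            comment = []
        elif stripped.startswith("#"):
            comment.append(stripped.lstrip("#").strip())
        else:
            # Any other line breaks the link between comment and sub.
            comment = []
    return results


# Made-up sample input for illustration.
sample = """\
# Type a string into the console.
# Waits until done.
sub type_string {
    my ($text) = @_;
}

sub helper { }
"""
for name, doc in extract_subs(sample):
    print(f"{name}: {doc or '(no comment)'}")
```

Running the function over every file in a directory and printing the pairs would already give a rough index of the available functions.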

Do some 3D printing

an invention by aschnell

Do some 3D printing, including designing the object.

The Team Dashboard Web Application

an idea by lslezak

Why a Dashboard?

Build a minetest server inside SUSE network

a project by whdu

An introduction from the minetest website: "Minetest is a near-infinite-world block sandbox game and a game engine, inspired by InfiniMiner, Minecraft, and the like. Minetest is available natively for Windows, OS X, GNU/Linux, Android, and FreeBSD. It is Free/Libre and Open Source Software, released under the LGPL 2.1 or later."

Another try on minimalistic C widget library

a project by metan

I've attempted this several times already and each attempt had different shortcomings. I'm kind of curious about how exactly I will fail this time.

Write SUSE engineering blog posts

a project by ptesarik

L3 bug reproduction often requires becoming the admin for a moment. I'd like to write down some nifty tricks I used to get certain “interesting” system configurations to work.

Research telemetry for (open)SUSE products

an idea by dmacvicar

Most design is still done with an embarrassingly small amount of data. Having released software for decades, we still don't know exactly which module is the most used, what workflows the customers are following, or where customers fail. It is all guesses and opinions.

rpi home surveillance

an idea by mvetter

I wanted to build a basic home surveillance system with an RPi and hedwig.

Learn Android development

an idea by mvetter

Over the years I have stumbled upon various Android projects where I needed a feature and wasn't able to implement it because I had no idea about Android development.

xdg-utils python rewrite

a project by simotek

The plan is to start working towards a rewrite of xdg-utils in python, focusing on the really bad bits such as dealing with desktop files and mime handling.

Advanced online payment app for desktop

an idea by MDoucha

There are mobile payment apps which allow you to pay via QR code. But I couldn't find any app that would work on desktop e.g. via special URI. So here's my idea:

Migration of Pology to Python3

an idea by vpelcak

Pology is a Python library and collection of command-line tools for in-depth processing of PO files, the translation file format of the GNU Gettext software translation system.

Bot to check new gems in the bundle for maintainability

an idea by hennevogel

If I submit a PR on github || SR on OBS that introduces new gems into the bundle I want a bot to tell me about the maintainability of this gem.

Port MicroOS to the Gameshell from Clockwork Pi

a project by aplanas

The Gameshell is a small game console based on the AllWinner R16 (Cortex-A7, IIRC the same CPU as the RPi2). It currently supports Debian, and a community member has ported ArchLinux to it.

Rewrite transactional-update in C++

a project by fos

transactional-update, the application to update read-only systems such as openSUSE MicroOS and openSUSE Kubic and the Transactional Server installations of openSUSE Leap, openSUSE Tumbleweed and SUSE Linux Enterprise Server, evolved from a POC to a fully fledged solution - and is currently completely written in Bash. This has been working really well in the past, but is gradually reaching its limits, especially when thinking about supporting additional file systems or ports to other Linux distributions - yes, we have a huge interest in other distributions adopting our technology.

Learn Crystal by porting part of YaST to that language

an invention by ancorgs

For a very long time, I have been planning to play with Crystal as a possible substitute/complement for Ruby. With that goal, I have isolated a very small subset of the Ruby project I know best (yast-storage-ng) and I want to migrate that subset to Crystal to get a general feeling for the language. See the repository with the experiment already in progress.

Sharing logic between desktop and web based applications through WASM

an invention by IGonzalezSosa

Project Description

Bonus project: Chameleon paintings

a project by kstreitova

This is an extra project for Hack Week evenings because there are never enough chameleons. Never.

Extend GObject based introspectable API to libzypp

an invention by zbenjamin

Agama Minimal Live Image

a project by jreidinger

Relm4-based user interface for Agama

an invention by IGonzalezSosa

Motivation

Port Agama's manager to Rust

a project by IGonzalezSosa

Initially, the Agama D-Bus service was written 100% in Ruby. For many things, it relies on YaST, so it makes sense to use the same language. It was great to have something working quickly, but it also had some drawbacks. The main problem is that, as YaST is not thread-safe, we separated the service into different processes (storage, software, localization, etc.). The system became more responsive but at the cost of eating a lot of RAM.

AI-Powered Unit Test Automation for Agama

a project by joseivanlopez

The Agama project is a multi-language Linux installer that leverages the distinct strengths of several key technologies:

Activity