Project Description

Dashboard to aggregate publicly available open source data and to transform, analyse, and forecast factors affecting water conflicts.

Full disclosure: This project was initially done as part of my university course, Data Systems Project. It was presented to the military division of TNO (Nederlandse Organisatie voor Toegepast Natuurwetenschappelijk Onderzoek). I took this project because it was an exciting ML/AI POC for me.

I also believed this would actually help prevent conflicts and provide aid, as opposed to being used maliciously. This project is 2 years old. TNO did not provide any of their data or expertise and does not own this project.

Current state:

FE: React

BE: Python / Flask

- Project is more than 1.5 years old.

- The UI has quite a lot of hardcoded data.

- There are some buggy UI issues as well.

- Backend could be broken

Goal for this Hackweek

GitHub (private): https://github.com/Shavindra/TNO

I like to keep things very simple and not overdo anything.

- Update packages

- Fix UI bugs.

- Update Python backend

Then work on one of the following:

- Integrate some data sources properly.

- Get at least 1 or 2 API endpoints working on a basic level.

- Any other suggestions?
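As a rough idea of what "an API endpoint working on a basic level" could mean, here is a minimal sketch. The backend is Flask, but to stay free of dependencies this uses a plain WSGI callable, which is the same interface a Flask application object exposes. The `/api/factors` path, the field names, and the sample records are all hypothetical placeholders, not taken from the project.

```python
import json

# Hypothetical sample data; the real dashboard would load this from its data sources.
FACTORS = [
    {"region": "example-region-a", "factor": "rainfall_anomaly", "value": -0.4},
    {"region": "example-region-b", "factor": "population_growth", "value": 0.02},
]


def app(environ, start_response):
    """Minimal WSGI app with a single read-only JSON endpoint."""
    is_get = environ.get("REQUEST_METHOD") == "GET"
    if is_get and environ.get("PATH_INFO") == "/api/factors":
        status, payload = "200 OK", {"factors": FACTORS}
    else:
        status, payload = "404 Not Found", {"error": "not found"}
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [
        ("Content-Type", "application/json"),
        ("Content-Length", str(len(body))),
    ])
    return [body]


def call(path, method="GET"):
    """Invoke the app in-process, the way a WSGI server would."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    chunks = app({"REQUEST_METHOD": method, "PATH_INFO": path}, start_response)
    return captured["status"], json.loads(b"".join(chunks))
```

To try it in a browser, `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` from the standard library is enough for local testing.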

Resources

https://www.wri.org/insights/we-predicted-where-violent-conflicts-will-occur-2020-water-often-factor

Looking for hackers with the skills:

ai machinelearning artificial-intelligence water conflicts dashboard reactjs react

This project is part of:

Hack Week 21


Similar Projects

Local AI assistant with optional integrations and mobile companion by livdywan

Description

Set up a local AI assistant for research, brainstorming and proofreading. Look into SurfSense, Open WebUI and possibly alternatives. Explore integration with services like openQA. There should be no cloud dependencies. Mobile phone support or an additional companion app would be a bonus. The goal is not to develop everything from scratch.

User Story

- Allison Average wants a one-click local AI assistant on their openSUSE laptop.

- Ash Awesome wants AI on their phone without an expensive subscription.

Goals

- Evaluate a local SurfSense setup for day to day productivity

- Test opencode for vibe coding and tool calling

Timeline

Day 1

- Took a look at SurfSense and started setting up a local instance.

- Unfortunately the container setup did not work well. Though this was a great opportunity to learn some new podman commands and refresh my memory on how to recover a corrupted btrfs filesystem.

Day 2

- Due to its sheer size and complexity SurfSense seems to have triggered btrfs fragmentation. Naturally this was not visible in any podman-related errors or in the journal. So this took up much of my second day.

Day 3

- Trying out opencode with Qwen3-Coder and Qwen2.5-Coder.

Day 4

- Context size is a thing, and models are not equally usable for vibe coding.

- Through arduous browsing for ollama models I did find some like `myaniu/qwen2.5-1m:7b` with 1m, but even then it is not obvious if they are meant for tool calls.

Day 5

- Whilst trying to make opencode usable I discovered ramalama which worked instantly and very well.

Outcomes

surfsense

I could not easily set this up completely, maybe in part due to my filesystem issues. I was expecting this to be less of an effort.

opencode

Installing opencode and ollama in my distrobox container along with the following configs worked for me.

When preparing a new project from scratch it is a good idea to start out with a template.

opencode.json

```
{
```

SUSE Observability MCP server by drutigliano

Description

The idea is to implement the SUSE Observability Model Context Protocol (MCP) Server as a specialized, middle-tier API designed to translate the complex, high-cardinality observability data from StackState (topology, metrics, and events) into highly structured, contextually rich, and LLM-ready snippets.

This MCP Server abstracts the StackState APIs. Its primary function is to serve as a Tool/Function Calling target for AI agents. When an AI receives an alert or a user query (e.g., "What caused the outage?"), the AI calls an MCP Server endpoint. The server then fetches the relevant operational facts, summarizes them, normalizes technical identifiers (like URNs and raw metric names) into natural language concepts, and returns a concise JSON or YAML payload. This payload is then injected directly into the LLM's prompt, ensuring the final diagnosis or action is grounded in real-time, accurate SUSE Observability data, effectively minimizing hallucinations.

Goals

- Grounding AI Responses: Ensure that all AI diagnoses, root cause analyses, and action recommendations are strictly based on verifiable, real-time data retrieved from the SUSE Observability StackState platform.

- Simplifying Data Access: Abstract the complexity of StackState's native APIs (e.g., Time Travel, 4T Data Model) into simple, semantic functions that can be easily invoked by LLM tool-calling mechanisms.

- Data Normalization: Convert complex, technical identifiers (like component URNs, raw metric names, and proprietary health states) into standardized, natural language terms that an LLM can easily reason over.

- Enabling Automated Remediation: Define clear, action-oriented MCP endpoints (e.g., execute_runbook) that allow the AI agent to initiate automated operational workflows (e.g., restarts, scaling) after a diagnosis, closing the loop on observability.

Hackweek STEP

- Create a functional MCP endpoint exposing one (or more) tool(s) to answer queries (like "What is the health of service X?") by fetching, normalizing, and returning live StackState data in an LLM-ready format.

Scope

- Implement a read-only MCP server that can:

- Connect to a live SUSE Observability instance and authenticate (with API token)

- Use tools to fetch data for a specific component URN (e.g., current health state, metrics, possibly topology neighbors, ...).

- Normalize response fields (e.g., URN to "Service Name," health state DEVIATING to "Unhealthy", raw metrics).

- Return the data as a structured JSON payload compliant with the MCP specification.
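The normalization step in this scope can be sketched as below. This is only an illustration in Python (the deliverable itself is a Golang server). The `DEVIATING` to "Unhealthy" mapping comes from the scope above; the other health labels, the input field names `urn` and `healthState`, and the rule of using the last URN segment as the service name are assumptions.

```python
# DEVIATING -> "Unhealthy" is taken from the scope above; the other
# entries are assumed for illustration.
HEALTH_LABELS = {
    "CLEAR": "Healthy",
    "DEVIATING": "Unhealthy",
    "CRITICAL": "Critical",
}


def service_name_from_urn(urn):
    """Use the last URN segment as a readable name (an assumed convention)."""
    return urn.rsplit("/", 1)[-1].rsplit(":", 1)[-1]


def normalize_component(raw):
    """Turn a raw component record into LLM-friendly fields.

    `raw` is a hypothetical dict with "urn" and "healthState" keys.
    """
    state = raw.get("healthState", "UNKNOWN")
    return {
        "service_name": service_name_from_urn(raw["urn"]),
        "health": HEALTH_LABELS.get(state, "Unknown"),
        "raw_health_state": state,  # kept so nothing is lost in translation
    }
```

Keeping the raw state next to the friendly label lets the agent quote the original value when it needs to be precise.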

Deliverables

- MCP Server v0.1: a running Golang MCP server with at least one tool.

- A README.md and a test script (e.g., curl commands or a simple notebook) showing how an AI agent would call the endpoint and the resulting JSON payload.

Outcome: A functional and testable API endpoint that proves the core concept: translating complex StackState data into a simple, LLM-ready format. This provides the foundation for developing AI-driven diagnostics and automated remediation.
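For readers new to MCP, the exchange an agent would have with such a server looks roughly like this. MCP messages are JSON-RPC 2.0, and tools are invoked with the `tools/call` method. The tool name `get_component_health`, its `urn` argument, and the payload fields are hypothetical; the actual server may name them differently.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build the JSON-RPC request an MCP client sends to invoke a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def build_tool_result(request_id, payload):
    """Build a successful tool result carrying the payload as text content."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {
            "content": [{"type": "text", "text": json.dumps(payload)}],
            "isError": False,
        },
    }


# Hypothetical tool name, argument, and payload, for illustration only.
request = build_tool_call(1, "get_component_health", {"urn": "urn:example:/service:checkout"})
response = build_tool_result(1, {"service_name": "checkout", "health": "Unhealthy"})
```

The `id` ties the response back to the request, which matters once an agent has several tool calls in flight.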

Resources

- https://www.honeycomb.io/blog/its-the-end-of-observability-as-we-know-it-and-i-feel-fine

- https://www.datadoghq.com/blog/datadog-remote-mcp-server

- https://modelcontextprotocol.io/specification/2025-06-18/index

- https://modelcontextprotocol.io/docs/develop/build-server

Basic implementation

- https://github.com/drutigliano19/suse-observability-mcp-server

Results

Successfully developed and delivered a fully functional SUSE Observability MCP Server that bridges language models with SUSE Observability's operational data. This project demonstrates how AI agents can perform intelligent troubleshooting and root cause analysis using structured access to real-time infrastructure data.

Example execution

SUSE Edge Image Builder MCP by eminguez

Description

Based on my other hackweek project, SUSE Edge Image Builder's JSON Schema, I would also like to build an MCP to be able to generate EIB config files the AI way.

Realistically I don't think I'll be able to have something consumable at the end of this hackweek but at least I would like to start exploring MCPs, the difference between an API and MCP, etc.

Goals

- Familiarize myself with MCPs

- Unrealistic: Have an MCP that can generate an EIB config file

Resources

Result

https://github.com/e-minguez/eib-mcp

I've extensively used antigravity and its agent mode to code this. This heavily uses https://hackweek.opensuse.org/25/projects/suse-edge-image-builder-json-schema for the MCP to be built.

I've ended up learning a lot of things about "prompting", JSON schemas in general, some Golang, MCPs and AI in general :)

Example:

Generate an Edge Image Builder configuration for an ISO image based on slmicro-6.2.iso, targeting x86_64 architecture. The output name should be 'my-edge-image' and it should install to /dev/sda. It should deploy a 3 nodes kubernetes cluster with nodes names "node1", "node2" and "node3" as:

* hostname: node1, IP: 1.1.1.1, role: initializer

* hostname: node2, IP: 1.1.1.2, role: agent

* hostname: node3, IP: 1.1.1.3, role: agent

The kubernetes version should be k3s 1.33.4-k3s1 and it should deploy a cert-manager helm chart (the latest one available according to https://cert-manager.io/docs/installation/helm/). It should create a user called "suse" with password "suse" and set ntp to "foo.ntp.org". The VIP address for the API should be 1.2.3.4

Generates:

```
apiVersion: "1.0"
image:
  arch: x86_64
  baseImage: slmicro-6.2.iso
  imageType: iso
  outputImageName: my-edge-image
kubernetes:
  helm:
    charts:
      - name: cert-manager
        repositoryName: jetstack
```

Enable more features in mcp-server-uyuni by j_renner

Description

I would like to contribute to mcp-server-uyuni (the MCP server for Uyuni / Multi-Linux Manager), exposing additional features as tools. There are lots of relevant features to be found throughout the API, for example:

- System operations and infos

- System groups

- Maintenance windows

- Ansible

- Reporting

- ...

At the end of the week I managed to enable basic system group operations:

- List all system groups visible to the user

- Create new system groups

- List systems assigned to a group

- Add and remove systems from groups

Goals

- Set up test environment locally with the MCP server and client + a recent MLM server [DONE]

- Identify features and use cases offering a benefit with limited effort required for enablement [DONE]

- Create a PR to the repo [DONE]

Resources

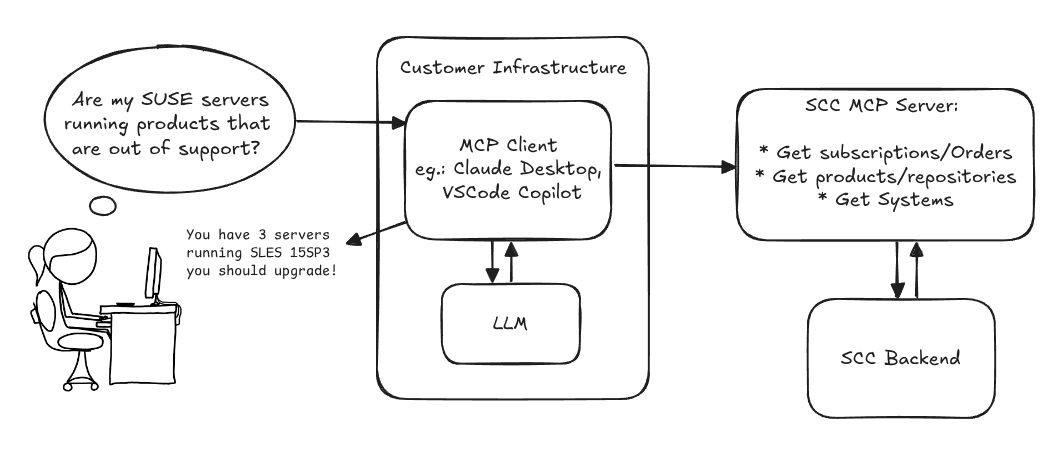

MCP Server for SCC by digitaltomm

Description

Provide an MCP Server implementation for customers to access data on scc.suse.com via MCP protocol. The core benefit of this MCP interface is that it has direct (read) access to customer data in SCC, so the AI agent gets enhanced knowledge about individual customer data, like subscriptions, orders and registered systems.

Architecture

Goals

We want to demonstrate a proof of concept for connecting to the SCC MCP server with any AI agent, for example gemini-cli or codex, enabling the user to ask questions regarding their SCC inventory.

For this Hackweek, we target that users get proper responses to these example questions:

- Which of my currently active systems are running products that are out of support?

- Do I have ready to use registration codes for SLES?

- What are the latest 5 released patches for SLES 15 SP6? Output as a list with release date, patch name, affected package names and fixed CVEs.

- Which versions of kernel-default are available on SLES 15 SP6?

Technical Notes

Similar to the organization APIs, this can expose to customers data about their subscriptions, orders, systems and products. Authentication should be done by organization credentials, similar to what needs to be provided to RMT/MLM. Customers can connect to the SCC MCP server from their own MCP-compatible client and Large Language Model (LLM), so no third party is involved.

Milestones

- [x] Basic MCP API setup

- MCP endpoints

  - [x] Products / Repositories

  - [x] Subscriptions / Orders

  - [x] Systems

  - [x] Packages

- [x] Document usage with Gemini CLI, Codex

Resources

Gemini CLI setup:

~/.gemini/settings.json:

Song Search with CLAP by gcolangiuli

Description

Contrastive Language-Audio Pretraining (CLAP) is an open-source library that enables the training of a neural network on both audio and text descriptions, making it possible to search for audio using a text input. Several pre-trained models for song search are already available on huggingface.

Goals

Evaluate how CLAP can be used for song searching and determine which types of queries yield the best results by developing a Minimum Viable Product (MVP) in Python. Based on the results of this MVP, future steps could include:

- Music Tagging;

- Free text search;

- Integration with an LLM (for example, with MCP or the OpenAI API) for music suggestions based on your own library.

The code for this project will be entirely written using AI to better explore and demonstrate AI capabilities.

Result

In this MVP we implemented:

- Async Song Analysis with CLAP model

- Free Text Search of the songs

- Similar song search based on vector representation

- Containerised version with web interface
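Both the free text search and the similar song search come down to comparing embedding vectors. A minimal sketch of that idea follows, assuming cosine similarity as the ranking measure (the MVP may use a different metric or a vector database). The three-dimensional vectors and song titles are made up by hand; real CLAP embeddings come from the model and have far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec, library, top_k=3):
    """Return the titles of the top_k songs most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), title) for title, vec in library.items()]
    scored.sort(reverse=True)
    return [title for _, title in scored[:top_k]]


# Toy embeddings, invented for illustration only.
LIBRARY = {
    "calm piano": [0.9, 0.1, 0.0],
    "heavy metal": [0.0, 0.2, 0.9],
    "acoustic ballad": [0.7, 0.3, 0.1],
}

# For text search the query vector would be the text embedding of the prompt;
# for similar song search it would be the audio embedding of a reference song.
results = search([1.0, 0.0, 0.0], LIBRARY, top_k=2)
```

Because text and audio are embedded into the same space, the same `search` function serves both use cases.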

We also documented what went well and what can be improved in the use of AI.

You can have a look at the result here:

Future work could focus on improving the performance and stability of the analysis.

References

- CLAP: The main model being researched;

- huggingface: Pre-trained models for CLAP;

- Free Music Archive: Creative Commons songs that can be used for testing;

AI-Powered Unit Test Automation for Agama by joseivanlopez

The Agama project is a multi-language Linux installer that leverages the distinct strengths of several key technologies:

- Rust: Used for the back-end services and the core HTTP API, providing performance and safety.

- TypeScript (React/PatternFly): Powers the modern web user interface (UI), ensuring a consistent and responsive user experience.

- Ruby: Integrates existing, robust YaST libraries (e.g., `yast-storage-ng`) to reuse established functionality.

The Problem: Testing Overhead

Developing and maintaining code across these three languages requires a significant, tedious effort in writing, reviewing, and updating unit tests for each component. This high cost of testing is a drain on developer resources and can slow down the project's evolution.

The Solution: AI-Driven Automation

This project aims to eliminate the manual overhead of unit testing by exploring and integrating AI-driven code generation tools. We will investigate how AI can:

- Automatically generate new unit tests as code is developed.

- Intelligently correct and update existing unit tests when the application code changes.

By automating this crucial but monotonous task, we can free developers to focus on feature implementation and significantly improve the speed and maintainability of the Agama codebase.

Goals

- Proof of Concept: Successfully integrate and demonstrate an authorized AI tool (e.g., `gemini-cli`) to automatically generate unit tests.

- Workflow Integration: Define and document a new unit test automation workflow that seamlessly integrates the selected AI tool into the existing Agama development pipeline.

- Knowledge Sharing: Establish a set of best practices for using AI in code generation, sharing the learned expertise with the broader team.

Contribution & Resources

We are seeking contributors interested in AI-powered development and improving developer efficiency. Whether you have previous experience with code generation tools or are eager to learn, your participation is highly valuable.

If you want to dive deep into AI for software quality, please reach out and join the effort!

- Authorized AI Tools: Tools supported by SUSE (e.g., `gemini-cli`)

- Focus Areas: Rust, TypeScript, and Ruby components within the Agama project.

Interesting Links