Description

The Uyuni server is provided as a container, but we still require it to run on Leap Micro? This is not how people expect to use containerized applications, so it would be great if we tested other host OSs and enabled them by providing builds of necessary tools for (e.g. mgradm). Interesting candidates should be:

- openSUSE Leap

- Cent OS 7

- Ubuntu

- ???

Goals

Make it really easy for anyone to run the Uyuni containerized server on whatever OS they want (with support for containers of course).

Looking for hackers with the skills:

This project is part of:

Hack Week 24

Activity

Comments

Be the first to comment!

Similar Projects

Uyuni Saltboot rework by oholecek

Description

When Uyuni switched over to the containerized proxies we had to abandon salt based saltboot infrastructure we had before. Uyuni already had integration with a Cobbler provisioning server and saltboot infra was re-implemented on top of this Cobbler integration.

What was not obvious from the start was that Cobbler, having all it's features, woefully slow when dealing with saltboot size environments. We did some improvements in performance, introduced transactions, and generally tried to make this setup usable. However the underlying slowness remained.

Goals

This project is not something trying to invent new things, it is just finally implementing saltboot infrastructure directly with the Uyuni server core.

Instead of generating grub and pxelinux configurations by Cobbler for all thousands of systems and branches, we will provide a GET access point to retrieve grub or pxelinux file during the boot:

/saltboot/group/grub/$fqdn and similar for systems /saltboot/system/grub/$mac

Next we adapt our tftpd translator to query these points when asked for default or mac based config.

Lastly similar thing needs to be done on our apache server when HTTP UEFI boot is used.

Resources

Uyuni read-only replica by cbosdonnat

Description

For now, there is no possible HA setup for Uyuni. The idea is to explore setting up a read-only shadow instance of an Uyuni and make it as useful as possible.

Possible things to look at:

- live sync of the database, probably using the WAL. Some of the tables may have to be skipped or some features disabled on the RO instance (taskomatic, PXT sessions…)

- Can we use a load balancer that routes read-only queries to either instance and the other to the RW one? For example, packages or PXE data can be served by both, the API GET requests too. The rest would be RW.

Goals

- Prepare a document explaining how to do it.

- PR with the needed code changes to support it

Flaky Tests AI Finder for Uyuni and MLM Test Suites by oscar-barrios

Description

Our current Grafana dashboards provide a great overview of test suite health, including a panel for "Top failed tests." However, identifying which of these failures are due to legitimate bugs versus intermittent "flaky tests" is a manual, time-consuming process. These flaky tests erode trust in our test suites and slow down development.

This project aims to build a simple but powerful Python script that automates flaky test detection. The script will directly query our Prometheus instance for the historical data of each failed test, using the jenkins_build_test_case_failure_age metric. It will then format this data and send it to the Gemini API with a carefully crafted prompt, asking it to identify which tests show a flaky pattern.

The final output will be a clean JSON list of the most probable flaky tests, which can then be used to populate a new "Top Flaky Tests" panel in our existing Grafana test suite dashboard.

Goals

By the end of Hack Week, we aim to have a single, working Python script that:

- Connects to Prometheus and executes a query to fetch detailed test failure history.

- Processes the raw data into a format suitable for the Gemini API.

- Successfully calls the Gemini API with the data and a clear prompt.

- Parses the AI's response to extract a simple list of flaky tests.

- Saves the list to a JSON file that can be displayed in Grafana.

- New panel in our Dashboard listing the Flaky tests

Resources

- Jenkins Prometheus Exporter: https://github.com/uyuni-project/jenkins-exporter/

- Data Source: Our internal Prometheus server.

- Key Metric:

jenkins_build_test_case_failure_age{jobname, buildid, suite, case, status, failedsince}. - Existing Query for Reference:

count by (suite) (max_over_time(jenkins_build_test_case_failure_age{status=~"FAILED|REGRESSION", jobname="$jobname"}[$__range])). - AI Model: The Google Gemini API.

- Example about how to interact with Gemini API: https://github.com/srbarrios/FailTale/

- Visualization: Our internal Grafana Dashboard.

- Internal IaC: https://gitlab.suse.de/galaxy/infrastructure/-/tree/master/srv/salt/monitoring

Outcome

- Jenkins Flaky Test Detector: https://github.com/srbarrios/jenkins-flaky-tests-detector and its container

- IaC on MLM Team: https://gitlab.suse.de/galaxy/infrastructure/-/tree/master/srv/salt/monitoring/jenkinsflakytestsdetector?reftype=heads, https://gitlab.suse.de/galaxy/infrastructure/-/blob/master/srv/salt/monitoring/grafana/dashboards/flaky-tests.json?ref_type=heads, and others.

- Grafana Dashboard: https://grafana.mgr.suse.de/d/flaky-tests/flaky-tests-detection @ @ text

Enable more features in mcp-server-uyuni by j_renner

Description

I would like to contribute to mcp-server-uyuni, the MCP server for Uyuni / Multi-Linux Manager) exposing additional features as tools. There is lots of relevant features to be found throughout the API, for example:

- System operations and infos

- System groups

- Maintenance windows

- Ansible

- Reporting

- ...

At the end of the week I managed to enable basic system group operations:

- List all system groups visible to the user

- Create new system groups

- List systems assigned to a group

- Add and remove systems from groups

Goals

- Set up test environment locally with the MCP server and client + a recent MLM server [DONE]

- Identify features and use cases offering a benefit with limited effort required for enablement [DONE]

- Create a PR to the repo [DONE]

Resources

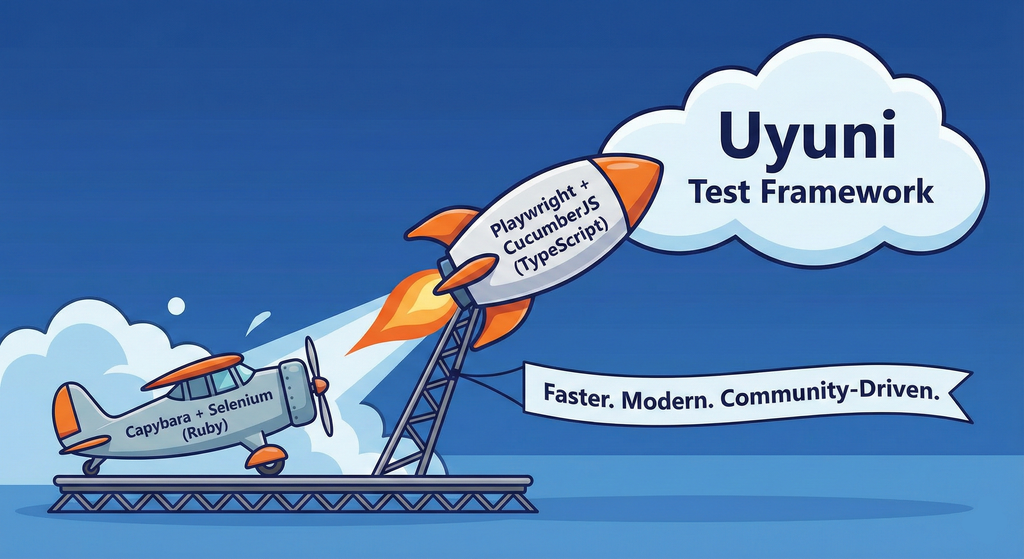

Move Uyuni Test Framework from Selenium to Playwright + AI by oscar-barrios

Description

This project aims to migrate the existing Uyuni Test Framework from Selenium to Playwright. The move will improve the stability, speed, and maintainability of our end-to-end tests by leveraging Playwright's modern features. We'll be rewriting the current Selenium code in Ruby to Playwright code in TypeScript, which includes updating the test framework runner, step definitions, and configurations. This is also necessary because we're moving from Cucumber Ruby to CucumberJS.

If you're still curious about the AI in the title, it was just a way to grab your attention. Thanks for your understanding.

Nah, let's be honest ![]() AI helped a lot to vibe code a good part of the Ruby methods of the Test framework, moving them to Typescript, along with the migration from Capybara to Playwright. I've been using "Cline" as plugin for WebStorm IDE, using Gemini API behind it.

AI helped a lot to vibe code a good part of the Ruby methods of the Test framework, moving them to Typescript, along with the migration from Capybara to Playwright. I've been using "Cline" as plugin for WebStorm IDE, using Gemini API behind it.

Goals

- Migrate Core tests including Onboarding of clients

- Improve test reliabillity: Measure and confirm a significant reduction of flakiness.

- Implement a robust framework: Establish a well-structured and reusable Playwright test framework using the CucumberJS

Resources

- Existing Uyuni Test Framework (Cucumber Ruby + Capybara + Selenium)

- My Template for CucumberJS + Playwright in TypeScript

- Started Hackweek Project

Technical talks at universities by agamez

Description

This project aims to empower the next generation of tech professionals by offering hands-on workshops on containerization and Kubernetes, with a strong focus on open-source technologies. By providing practical experience with these cutting-edge tools and fostering a deep understanding of open-source principles, we aim to bridge the gap between academia and industry.

For now, the scope is limited to Spanish universities, since we already have the contacts and have started some conversations.

Goals

- Technical Skill Development: equip students with the fundamental knowledge and skills to build, deploy, and manage containerized applications using open-source tools like Kubernetes.

- Open-Source Mindset: foster a passion for open-source software, encouraging students to contribute to open-source projects and collaborate with the global developer community.

- Career Readiness: prepare students for industry-relevant roles by exposing them to real-world use cases, best practices, and open-source in companies.

Resources

- Instructors: experienced open-source professionals with deep knowledge of containerization and Kubernetes.

- SUSE Expertise: leverage SUSE's expertise in open-source technologies to provide insights into industry trends and best practices.

Port the classic browser game HackTheNet to PHP 8 by dgedon

Description

The classic browser game HackTheNet from 2004 still runs on PHP 4/5 and MySQL 5 and needs a port to PHP 8 and e.g. MariaDB.

Goals

- Port the game to PHP 8 and MariaDB 11

- Create a container where the game server can simply be started/stopped

Resources

- https://github.com/nodeg/hackthenet

Help Create A Chat Control Resistant Turnkey Chatmail/Deltachat Relay Stack - Rootless Podman Compose, OpenSUSE BCI, Hardened, & SELinux by 3nd5h1771fy

Description

The Mission: Decentralized & Sovereign Messaging

FYI: If you have never heard of "Chatmail", you can visit their site here, but simply put it can be thought of as the underlying protocol/platform decentralized messengers like DeltaChat use for their communications. Do not confuse it with the honeypot looking non-opensource paid for prodect with better seo that directs you to chatmailsecure(dot)com

In an era of increasing centralized surveillance by unaccountable bad actors (aka BigTech), "Chat Control," and the erosion of digital privacy, the need for sovereign communication infrastructure is critical. Chatmail is a pioneering initiative that bridges the gap between classic email and modern instant messaging, offering metadata-minimized, end-to-end encrypted (E2EE) communication that is interoperable and open.

However, unless you are a seasoned sysadmin, the current recommended deployment method of a Chatmail relay is rigid, fragile, difficult to properly secure, and effectively takes over the entire host the "relay" is deployed on.

Why This Matters

A simple, host agnostic, reproducible deployment lowers the entry cost for anyone wanting to run a privacy‑preserving, decentralized messaging relay. In an era of perpetually resurrected chat‑control legislation threats, EU digital‑sovereignty drives, and many dangers of using big‑tech messaging platforms (Apple iMessage, WhatsApp, FB Messenger, Instagram, SMS, Google Messages, etc...) for any type of communication, providing an easy‑to‑use alternative empowers:

- Censorship resistance - No single entity controls the relay; operators can spin up new nodes quickly.

- Surveillance mitigation - End‑to‑end OpenPGP encryption ensures relay operators never see plaintext.

- Digital sovereignty - Communities can host their own infrastructure under local jurisdiction, aligning with national data‑policy goals.

By turning the Chatmail relay into a plug‑and‑play container stack, we enable broader adoption, foster a resilient messaging fabric, and give developers, activists, and hobbyists a concrete tool to defend privacy online.

Goals

As I indicated earlier, this project aims to drastically simplify the deployment of Chatmail relay. By converting this architecture into a portable, containerized stack using Podman and OpenSUSE base container images, we can allow anyone to deploy their own censorship-resistant, privacy-preserving communications node in minutes.

Our goal for Hack Week: package every component into containers built on openSUSE/MicroOS base images, initially orchestrated with a single container-compose.yml (podman-compose compatible). The stack will:

- Run on any host that supports Podman (including optimizations and enhancements for SELinux‑enabled systems).

- Allow network decoupling by refactoring configurations to move from file-system constrained Unix sockets to internal TCP networking, allowing containers achieve stricter isolation.

- Utilize Enhanced Security with SELinux by using purpose built utilities such as udica we can quickly generate custom SELinux policies for the container stack, ensuring strict confinement superior to standard/typical Docker deployments.

- Allow the use of bind or remote mounted volumes for shared data (

/var/vmail, DKIM keys, TLS certs, etc.). - Replace the local DNS server requirement with a remote DNS‑provider API for DKIM/TXT record publishing.

By delivering a turnkey, host agnostic, reproducible deployment, we lower the barrier for individuals and small communities to launch their own chatmail relays, fostering a decentralized, censorship‑resistant messaging ecosystem that can serve DeltaChat users and/or future services adopting this protocol

Resources

- The links included above

- https://chatmail.at/doc/relay/

- https://delta.chat/en/help

- Project repo -> https://codeberg.org/EndShittification/containerized-chatmail-relay

Rewrite Distrobox in go (POC) by fabriziosestito

Description

Rewriting Distrobox in Go.

Main benefits:

- Easier to maintain and to test

- Adapter pattern for different container backends (LXC, systemd-nspawn, etc.)

Goals

- Build a minimal starting point with core commands

- Keep the CLI interface compatible: existing users shouldn't notice any difference

- Use a clean Go architecture with adapters for different container backends

- Keep dependencies minimal and binary size small

- Benchmark against the original shell script

Resources

- Upstream project: https://github.com/89luca89/distrobox/

- Distrobox site: https://distrobox.it/

- ArchWiki: https://wiki.archlinux.org/title/Distrobox