Project Description

At SCC, we have a rotating COOTW (Commanding Officer of the Week) duty. It involves responding to customer requests from Jira and Slack help channels, monitoring production systems, and doing small chores. Usually, we have documentation to help the COOTW answer questions and quickly find fixes. Most of it is distributed across GitHub, Trello and SUSE Support documentation. The aim of this project is to explore the magic of LLMs and create a conversational bot.

Goal for this Hackweek

- Build data ingestion

Data source:

- SUSE KB docs

- scc github docs

- scc trello knowledge board

Test out new RAG architecture
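As a rough illustration of the ingestion and retrieval steps listed above, here is a minimal sketch that uses only the Python standard library. It assumes a plain bag-of-words similarity instead of real embeddings, and the sample documents, function names and prompt wording are all hypothetical; it is not taken from the cootwbot repository.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def chunk(text, size=40):
    """Split a document into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def build_index(docs):
    """Ingest {source: text} into a list of (source, chunk, vector) entries."""
    index = []
    for source, text in docs.items():
        for piece in chunk(text):
            index.append((source, piece, Counter(tokenize(piece))))
    return index

def retrieve(index, question, k=2):
    """Return the k chunks most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(index, key=lambda entry: cosine(q, entry[2]), reverse=True)
    return [(source, piece) for source, piece, _ in ranked[:k]]

def build_prompt(question, hits):
    """Assemble the text that would be sent to the LLM (wording is an assumption)."""
    context = "\n".join(f"[{source}] {piece}" for source, piece in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Hypothetical sample documents standing in for the three data sources.
DOCS = {
    "kb": "To reset a registration code open the subscription page and regenerate the code.",
    "github": "Deployments are rolled back by redeploying the previous release tag.",
    "trello": "When the mirror job fails restart the sync worker and check the logs.",
}
INDEX = build_index(DOCS)
```

Calling `retrieve(INDEX, "How are deployments rolled back?", k=1)` picks the chunk from the `github` source, and `build_prompt` wraps it into the text handed to the model. A real setup would likely swap the bag-of-words vectors for embeddings and a vector store.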

https://gitlab.suse.de/ngetahun/cootwbot

This project is part of:

Hack Week 23, Hack Week 24

Similar Projects

Liz - Prompt autocomplete by ftorchia

Description

Liz is the Rancher AI assistant for cluster operations.

Goals

We want to help users when sending new messages to Liz, by adding an autocomplete feature to complete their requests based on the context.

Example:

- User prompt: "Can you show me the list of p"

- Autocomplete suggestion: "Can you show me the list of p...od in local cluster?"

Example:

- User prompt: "Show me the logs of #rancher-"

- Chat console: It shows a drop-down widget, next to the # character, with the list of available pod names starting with "rancher-".

Technical Overview

- The AI agent should expose a new ws/autocomplete endpoint to proxy autocomplete messages to the LLM.

- The UI extension should be able to display prompt suggestions and allow users to apply the autocomplete to the Prompt via keyboard shortcuts.
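The second example above (the `#` drop-down) could be backed by a simple prefix match. The sketch below is only an illustration: the function name, the regular expression and the pod names are assumptions, and a real agent would query the cluster for the names.

```python
import re

def mention_candidates(prompt, pod_names, limit=5):
    """Return pod names matching the '#prefix' the user is currently typing.

    Only a '#...' token at the very end of the prompt triggers completion.
    """
    match = re.search(r"#([\w.-]*)$", prompt)
    if match is None:
        return []
    prefix = match.group(1)
    return sorted(name for name in pod_names if name.startswith(prefix))[:limit]

# Hypothetical pod names used only for illustration.
PODS = ["rancher-7d9f", "rancher-webhook-5c1", "fleet-agent-0", "coredns-abc"]
```

With these sample names, the prompt `Show me the logs of #rancher-` yields the two pods starting with `rancher-`, while a prompt without a trailing `#` token yields no suggestions.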

Resources

Uyuni Health-check Grafana AI Troubleshooter by ygutierrez

Description

This project explores the feasibility of using the open-source Grafana LLM plugin to enhance the Uyuni Health-check tool with LLM capabilities. The idea is to integrate a chat-based "AI Troubleshooter" directly into existing dashboards, allowing users to ask natural-language questions about errors, anomalies, or performance issues.

Goals

- Investigate if and how the grafana-llm-app plug-in can be used within the Uyuni Health-check tool.

- Investigate if this plug-in can be used to query LLMs for troubleshooting scenarios.

- Evaluate support for local LLMs and external APIs through the plugin.

- Evaluate if and how the Uyuni MCP server could be integrated as another source of information.

Resources

The Agentic Rancher Experiment: Do Androids Dream of Electric Cattle? by moio

Rancher is a beast of a codebase. Let's investigate if the new 2025 generation of GitHub Autonomous Coding Agents and Copilot Workspaces can actually tame it.

The Plan

Create a sandbox GitHub Organization, clone in key Rancher repositories, and let the AI loose to see if it can handle real-world enterprise OSS maintenance - or if it just hallucinates new breeds of Kubernetes resources!

Specifically, throw "Agentic Coders" some typical tasks in a complex, long-lived open-source project, such as:

❥ The Grunt Work: generate missing GoDocs, unit tests, and refactorings. Rebase PRs.

❥ The Complex Stuff: fix actual (historical) bugs and feature requests to see if they can traverse the complexity without (too much) human hand-holding.

❥ Hunting Down Gaps: find areas lacking in docs, areas of improvement in code, dependency bumps, and so on.

If time allows, also experiment with Model Context Protocol (MCP) to give agents context on our specific build pipelines and CI/CD logs.

Why?

We know AI can write "Hello World." and also moderately complex programs from a green field. But can it rebase a 3-month-old PR with conflicts in rancher/rancher? I want to find the breaking point of current AI agents to determine if and how they can help us to reduce our technical debt, work faster and better. At the same time, find out about pitfalls and shortcomings.

The CONCLUSION!!!

A State of the Union document was compiled to summarize lessons learned this week. For more gory details, just read on the diary below!

Kubernetes-Based ML Lifecycle Automation by lmiranda

Description

This project aims to build a complete end-to-end Machine Learning pipeline running entirely on Kubernetes, using Go, and containerized ML components.

The pipeline will automate the lifecycle of a machine learning model, including:

- Data ingestion/collection

- Model training as a Kubernetes Job

- Model artifact storage in an S3-compatible registry (e.g. Minio)

- A Go-based deployment controller that automatically deploys new model versions to Kubernetes using Rancher

- A lightweight inference service that loads and serves the latest model

- Monitoring of model performance and service health through Prometheus/Grafana

The outcome is a working prototype of an MLOps workflow that demonstrates how AI workloads can be trained, versioned, deployed, and monitored using the Kubernetes ecosystem.

Goals

By the end of Hack Week, the project should:

Produce a fully functional ML pipeline running on Kubernetes with:

- Data collection job

- Training job container

- Storage and versioning of trained models

- Automated deployment of new model versions

- Model inference API service

- Basic monitoring dashboards

Showcase a Go-based deployment automation component, which scans the model registry and automatically generates & applies Kubernetes manifests for new model versions.

Enable continuous improvement by making the system modular and extensible (e.g., additional models, metrics, autoscaling, or drift detection can be added later).

Prepare a short demo explaining the end-to-end process and how new models flow through the system.
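The registry-scanning step named in the goals above could look roughly like the following. The project targets Go; this sketch is in Python purely for illustration. The `model-vX.Y.Z` key naming, the image name and the `MODEL_KEY` environment variable are assumptions, not details from the project.

```python
def parse_version(key):
    """Turn 'model-v1.10.0' into (1, 10, 0) so versions sort numerically."""
    return tuple(int(part) for part in key.rsplit("-v", 1)[1].split("."))

def latest_model(registry_keys):
    """Pick the newest model artifact from a list of registry keys."""
    return max(registry_keys, key=parse_version)

def render_deployment(model_key, image="registry.example.com/inference:latest"):
    """Build a Deployment manifest (as a dict) that serves the given model."""
    version = model_key.rsplit("-v", 1)[1]
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "inference", "labels": {"model-version": version}},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": {"app": "inference"}},
            "template": {
                "metadata": {"labels": {"app": "inference"}},
                "spec": {"containers": [{
                    "name": "inference",
                    "image": image,  # hypothetical image name
                    # Hypothetical variable telling the service which model to load.
                    "env": [{"name": "MODEL_KEY", "value": model_key}],
                }]},
            },
        },
    }
```

Comparing versions as integer tuples avoids the string-sorting pitfall where `1.9.3` would rank above `1.10.0`. The resulting dict can be serialized to YAML or JSON and applied to the cluster.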

Resources

Updates

- Training pipeline and datasets

- Inference Service py

Background Coding Agent by mmanno

Description

I had only bad experiences with AI one-shots. However, monitoring agent work closely and interfering often did result in productivity gains.

Now, other companies are using agents in pipelines. That makes sense to me: just as with CI, we want to offload work to pipelines. Our engineering teams are consistently slowed down by "toil": low-impact, repetitive maintenance tasks. A simple linter rule change, a dependency bump, rebasing patch sets on top of newer releases, or an API deprecation requires dozens of manual PRs, draining time from feature development.

So far we have been writing deterministic, script-based automation for these tasks, and that turns out to be a common trap: these scripts are brittle, complex, and become a massive maintenance burden themselves.

Can we make prompts and workflows smart enough to succeed at background coding?

Goals

We will build a platform that allows engineers to execute complex code transformations using prompts.

By automating this toil, we accelerate large-scale migrations and allow teams to focus on high-value work.

Our platform will consist of three main components:

- "Change" Definition: Engineers will define a transformation as a simple, declarative manifest:

- The target repositories.

- A wrapper to run a "coding agent", e.g., "gemini-cli".

- The task as a natural language prompt.

- "Change" Management Service: A central service that orchestrates the jobs. It will receive Change definitions and be responsible for the job lifecycle.

- Execution Runners: We could use existing sandboxed CI runners (like GitHub/GitLab runners) to execute each job or spawn a container.
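To make the three components above more concrete, here is a minimal sketch of a Change manifest and an in-memory queue. The field names, the validation rules and the one-job-per-repository fan-out are all assumptions for illustration; the actual manifest format is one of the MVP items still to be defined.

```python
from collections import deque
from dataclasses import dataclass

@dataclass
class Change:
    """Declarative description of one code transformation (hypothetical fields)."""
    name: str
    repositories: list
    agent: str   # wrapper that runs the coding agent, e.g. "gemini-cli"
    prompt: str  # the task in natural language

    def validate(self):
        """Return a list of human-readable problems; empty means valid."""
        errors = []
        if not self.repositories:
            errors.append("at least one target repository is required")
        if not self.agent.strip():
            errors.append("agent wrapper must be set")
        if not self.prompt.strip():
            errors.append("prompt must not be empty")
        return errors

class ChangeQueue:
    """Toy stand-in for the management service: one queued job per repository."""

    def __init__(self):
        self._jobs = deque()

    def submit(self, change):
        errors = change.validate()
        if errors:
            raise ValueError("; ".join(errors))
        for repo in change.repositories:
            self._jobs.append({"change": change.name, "repo": repo,
                               "agent": change.agent, "prompt": change.prompt,
                               "state": "queued"})
        return len(change.repositories)

    def next_job(self):
        """Hand the oldest queued job to a runner, or None if the queue is empty."""
        return self._jobs.popleft() if self._jobs else None
```

A runner would call `next_job()`, check out the repository, run the agent wrapper with the prompt, and open a PR. Persistence, retries and job state transitions are left out of this sketch.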

MVP

- Define the Change manifest format.

- Build the core Management Service that can accept and queue a Change.

- Connect management service and runners, dynamically dispatch jobs to runners.

- Create a basic runner script that can run a hard-coded prompt against a test repo and open a PR.

Stretch Goals:

- Multi-layered approach, Workflow Agents trigger Coding Agents:

- Workflow Agent: Gather information about the task interactively from the user.

- Coding Agent: Once the interactive agent has refined the task into a clear prompt, it hands this prompt off to the "coding agent." This background agent is responsible for executing the task and producing the actual pull request.

- Use MCP:

- Workflow Agent gathers context information from Slack, Github, etc.

- Workflow Agent triggers a Coding Agent.

- Create a "Standard Task" library with reliable prompts.

- Rebasing rancher-monitoring to a new version of kube-prom-stack

- Update charts to use new images

- Apply changes to comply with a new linter

- Bump complex Go dependencies, like k8s modules

- Backport pull requests to other branches

- Add “review agents” that review the generated PR.

See also

Bugzilla goes AI - Phase 1 by nwalter

Description

This project, Bugzilla goes AI, aims to boost developer productivity by creating an autonomous AI bug agent during Hackweek. The primary goal is to reduce the time employees spend triaging bugs by integrating Ollama to summarize issues, recommend next steps, and push focused daily reports to a Web Interface.

Goals

To reduce employee time spent on Bugzilla by implementing an AI tool that triages and summarizes bug reports, providing actionable recommendations to the team via Web Interface.
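A summarization call against a local Ollama instance could be sketched as below. It uses Ollama's `/api/generate` endpoint on the default local port with streaming disabled. The model name, the prompt wording and the shape of the `bug` dictionary are assumptions, not details from the project.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def build_triage_prompt(bug):
    """Turn a bug record (hypothetical fields) into a triage prompt."""
    return (
        "Summarize this bug report in two sentences and recommend one next step.\n"
        f"ID: {bug['id']}\nSummary: {bug['summary']}\nDescription: {bug['description']}"
    )

def build_request(bug, model="llama3"):
    """Prepare the HTTP request; the model name is an assumption."""
    payload = {"model": model, "prompt": build_triage_prompt(bug), "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )

def triage(bug, model="llama3"):
    """Send the bug to a running Ollama instance and return the generated text."""
    with urllib.request.urlopen(build_request(bug, model)) as resp:
        return json.loads(resp.read())["response"]
```

`triage` needs a running Ollama server with the chosen model pulled. `build_request` can be inspected offline, which makes the prompt easy to iterate on.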

Project Charter

Description

Project Achievements during Hackweek

In this file you can read about what we achieved during Hackweek.

Creating test suite using LLM on existing codebase of a solar router by fcrozat

Description

Two years ago, I evaluated solar routers as part of hackweek24. I've since assembled one, and it is running almost smoothly.

However, its code quality is not perfect and the codebase doesn't have any test cases (which is tricky, since it is embedded code and relies on getting external data to react).

Before improving the code itself, a test suite should be created to ensure code additions don't cause regressions.

Goals

Create a test suite that allows testing the solar router code in a virtual environment, using an LLM to help create it.

If successful, try to improve the codebase itself by having it reviewed by an LLM.

Resources

Backporting patches using LLM by jankara

Description

Backporting Linux kernel fixes (either for CVE issues or as part of the general git-fixes workflow) is boring and mostly mechanical work (dealing with changes in context, renamed variables, new helper functions etc.). The idea of this project is to explore the use of LLMs for backporting Linux kernel commits to SUSE kernels.

Goals

- Create a safe environment that allows the LLM to run and backport patches without exposing the whole filesystem to it (for privacy and security reasons).

- Write a prompt that will guide the LLM through the backporting process. Fine-tune it based on experimental results.

- Explore success rate of LLMs when backporting various patches.
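The first goal above, confining the LLM to a single directory, could be approached by building the `docker run` invocation programmatically. This is a sketch under assumptions: the image name is hypothetical, the `/work` mount point is arbitrary, and passing `GEMINI_API_KEY` through from the host assumes that the Gemini CLI reads its key from that variable.

```python
import os

def sandbox_command(kernel_tree, image="gemini-cli-backporter"):
    """Build a docker command that exposes only the kernel tree to the container.

    The image name is a hypothetical placeholder.
    """
    tree = os.path.abspath(kernel_tree)
    return [
        "docker", "run", "--rm", "-it",
        "--volume", f"{tree}:/work",   # the only host path visible inside
        "--workdir", "/work",
        "--env", "GEMINI_API_KEY",     # forwarded from the host environment (assumption)
        image,
    ]
```

The returned list can be passed to `subprocess.run`. Nothing else from the host filesystem is mounted, so the agent can only read and modify the tree it is meant to work on.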

Resources

- Docker

- Gemini CLI

Repository

The current version of the container, with some instructions for use, is at: https://gitlab.suse.de/jankara/gemini-cli-backporter

Song Search with CLAP by gcolangiuli

Description

Contrastive Language-Audio Pretraining (CLAP) is an open-source library that enables the training of a neural network on both audio and text descriptions, making it possible to search for audio using a text input. Several pre-trained models for song search are already available on Hugging Face.

Goals

Evaluate how CLAP can be used for song searching and determine which types of queries yield the best results by developing a Minimum Viable Product (MVP) in Python. Based on the results of this MVP, future steps could include:

- Music Tagging;

- Free text search;

- Integration with an LLM (for example, with MCP or the OpenAI API) for music suggestions based on your own library.

The code for this project will be entirely written using AI to better explore and demonstrate AI capabilities.

Result

In this MVP we implemented:

- Async song analysis with the CLAP model

- Free Text Search of the songs

- Similar song search based on vector representation

- Containerised version with web interface
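The free-text and similar-song searches listed above both come down to ranking stored vectors by similarity to a query vector. The sketch below illustrates this with plain cosine similarity. The three-dimensional vectors and song titles are made up, and a real setup would obtain the embeddings from a CLAP model (text embedding for free-text search, audio embedding for similar-song search).

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def search(query_vec, library, k=3, exclude=None):
    """Return the k titles whose embeddings are closest to query_vec."""
    scored = [(cosine(query_vec, vec), title)
              for title, vec in library.items() if title != exclude]
    scored.sort(reverse=True)
    return [title for _, title in scored[:k]]

def similar_songs(title, library, k=3):
    """Use a song's own embedding as the query, skipping the song itself."""
    return search(library[title], library, k=k, exclude=title)

# Made-up embeddings; real ones would come from a CLAP model.
LIBRARY = {
    "calm piano": [0.9, 0.1, 0.0],
    "soft strings": [0.8, 0.2, 0.1],
    "heavy metal": [0.0, 0.1, 0.9],
}
```

For larger libraries, the linear scan would typically be replaced by a vector index.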

We also documented what went well and what can be improved in the use of AI.

You can have a look at the result here:

Future work could focus on improving the performance and stability of the analysis.

References

- CLAP: The main model being researched;

- huggingface: Pre-trained models for CLAP;

- Free Music Archive: Creative Commons songs that can be used for testing;

Explore LLM evaluation metrics by thbertoldi

Description

Learn the best practices for evaluating LLM performance with an open-source framework such as DeepEval.

Goals

Curate the knowledge learned during practice and present it to colleagues.

-> Maybe publish a blog post on SUSE's blog?

Resources

https://deepeval.com

https://docs.pactflow.io/docs/bi-directional-contract-testing

MCP Server for SCC by digitaltomm

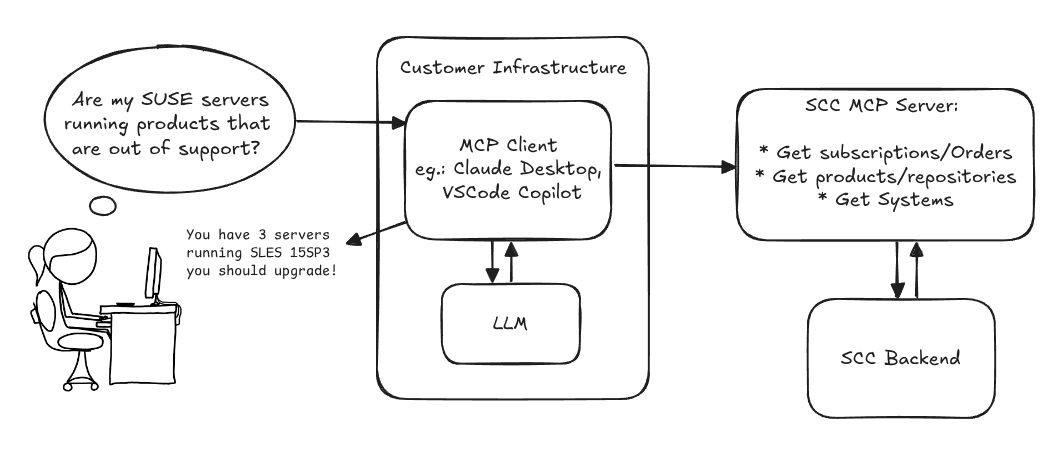

Description

Provide an MCP Server implementation for customers to access data on scc.suse.com via MCP protocol. The core benefit of this MCP interface is that it has direct (read) access to customer data in SCC, so the AI agent gets enhanced knowledge about individual customer data, like subscriptions, orders and registered systems.

Architecture

Goals

We want to demonstrate a proof of concept that connects any AI agent, for example gemini-cli or codex, to the SCC MCP server, enabling users to ask questions about their SCC inventory.

For this Hackweek, the target is that users get proper responses to these example questions:

- Which of my currently active systems are running products that are out of support?

- Do I have ready-to-use registration codes for SLES?

- What are the latest 5 released patches for SLES 15 SP6? Output as a list with release date, patch name, affected package names and fixed CVEs.

- Which versions of kernel-default are available on SLES 15 SP6?

Technical Notes

Similar to the organization APIs, this can expose data about subscriptions, orders, systems and products to customers. Authentication should be done with organization credentials, similar to what needs to be provided to RMT/MLM. Customers can connect to the SCC MCP server from their own MCP-compatible client and Large Language Model (LLM), so no third party is involved.
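For orientation, an MCP client invokes a server tool with a JSON-RPC 2.0 `tools/call` request. The sketch below builds such a message together with an authentication header. The tool name `list_systems`, its arguments, and the use of HTTP Basic authentication for the organization credentials are assumptions for illustration, not the actual SCC MCP interface.

```python
import base64
from itertools import count

_request_ids = count(1)

def basic_auth_header(username, password):
    """Encode organization credentials as an HTTP Basic header (assumed scheme)."""
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return {"Authorization": f"Basic {token}"}

def tool_call(tool, arguments):
    """Build a JSON-RPC 2.0 message asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }

# Hypothetical tool and arguments, loosely modelled on the first example question.
request = tool_call("list_systems", {"status": "active"})
headers = basic_auth_header("ORG_USERNAME", "ORG_PASSWORD")
```

In practice the MCP client (for example Gemini CLI or Codex) generates these messages itself; the sketch only shows what travels over the wire.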

Milestones

- [x] Basic MCP API setup

- MCP endpoints:

  - [x] Products / Repositories

  - [x] Subscriptions / Orders

  - [x] Systems

  - [x] Packages

- [x] Document usage with Gemini CLI, Codex

Resources

Gemini CLI setup:

~/.gemini/settings.json: