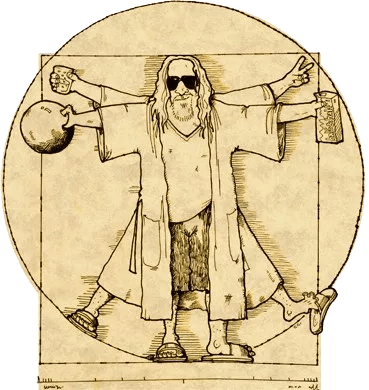

The most relaxed testing framework of Kubernetes in the world

Repo: GitHub

Dudelopers abide!

Come join the most relaxed testing framework of Kubernetes in the world – Dudenetes. If you’d like to find continuous peace on Github and enjoy bowling in production, man, we’ll help you get started. Right after a little nap.

You shouldn’t try too hard to enjoy working with Kubernetes. Enjoying working with Kubernetes is relatively easy if you just take it easy and scale with the flow. It’s not all about sprints, achievements and success. It’s about applying basic common sense, speaking English for telling stories, and not being worried about how other creeps roll at you. After all, well, it’s just their opinion, man.

The beauty of the Dudenetes framework is its simplicity.

> Once you write code for testing code, it gets too complex and everything can go wrong.

The Kubernetes e2e testing framework is hard and complicated and nobody knows what to do about it. So don’t do anything about it. Just take it easy, man. Kick back with some friends and oat soda and if the goddamn control-plane crashes into the mountain, just mark it zero and don’t go over the line – that is to say, abide. And then, when nobody’s calling, let’s go find some good burgers, dude.

Take that hill and be a good fellow dudeloper! That means sharing your stories and using godog to map them to kubectl commands.

See you further on up the trail,

> There's 106 miles to Chicago, we've got a full tank of gas, half a pack of cigarettes, it's dark out, and we're wearing sunglasses. Hit it!

Thankie

What is this?

Dudenetes is the combination of godog and kubectl. People who use this project are called Dudelopers.

Disclaimer

Dudenetes is a testing framework for Kubernetes with the philosophy, or lifestyle inspired by "The Dude", the protagonist of the Coen Brothers' 1998 film The Big Lebowski.

This project is part of:

Hack Week 18

Similar Projects

Q2Boot - A handy QEMU VM launcher by amanzini

Description

Q2Boot (Qemu Quick Boot) is a command-line tool that wraps QEMU to provide a streamlined experience for launching virtual machines. It automatically configures common settings like KVM acceleration, virtio drivers, and networking while allowing customization through both configuration files and command-line options.

The project was originally a personal utility written in D and has recently been rewritten in idiomatic Go. The repository is at https://github.com/ilmanzo/q2boot

Goals

Improve the project by testing it in different scenarios, addressing issues, and proposing new features. It will benefit from some basic integration testing that uses small sample disk images.

Updates

- Dec 1, 2025: refactored command-line options, added structured logging. Released v0.0.2

- Dec 2, 2025: added an option for an external monitor via telnet

- Dec 4, 2025: released v0.0.3 with architecture auto-detection

- Dec 5, 2025: filed new issues and did general polishing. Designing E2E testing

Resources

A CLI for Harvester by mohamed.belgaied

Harvester does not officially come with a CLI tool; the user is supposed to interact with Harvester mostly through the UI. Though it is theoretically possible to use kubectl to interact with Harvester, manipulating KubeVirt YAML objects is absolutely not user friendly. Inspired by tools like multipass from Canonical, which make it easy to rapidly create one or multiple VMs, I began the development of Harvester CLI. Currently it works, but Harvester CLI needs some love to be up to date with Harvester v1.0.2, and it needs some bug fixes and improvements as well.

Project Description

Harvester CLI is a command line interface tool written in Go, designed to simplify interfacing with a Harvester cluster as a user. It is especially useful for testing purposes as you can easily and rapidly create VMs in Harvester by providing a simple command such as:

harvester vm create my-vm --count 5

to create 5 VMs named my-vm-01 to my-vm-05.
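The naming scheme in that example (a base name plus a zero-padded counter) can be sketched in a few lines of Go. This is only an illustration of the pattern shown above, not code taken from Harvester CLI; `vmNames` is a hypothetical helper.

```go
package main

import "fmt"

// vmNames returns count names of the form base-01, base-02, ...
// It returns nil when count is not positive.
func vmNames(base string, count int) []string {
	if count <= 0 {
		return nil
	}
	names := make([]string, 0, count)
	for i := 1; i <= count; i++ {
		names = append(names, fmt.Sprintf("%s-%02d", base, i))
	}
	return names
}

func main() {
	fmt.Println(vmNames("my-vm", 5))
}
```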

Harvester CLI is functional but needs a number of improvements: up-to-date functionality with Harvester v1.0.2 (some minor issues right now), changing the default behaviour to create an openSUSE VM instead of an Ubuntu VM, fixing some bugs, etc.

Github Repo for Harvester CLI: https://github.com/belgaied2/harvester-cli

Done in previous Hackweeks

- Create a GitHub Actions pipeline to automatically integrate Harvester CLI into Homebrew repositories: DONE

- Automatically package Harvester CLI as RPMs for openSUSE / Red Hat or as DEBs: DONE

Goal for this Hackweek

The goal for this Hackweek is to bring Harvester CLI up-to-speed with latest Harvester versions (v1.3.X and v1.4.X), and improve the code quality as well as implement some simple features and bug fixes.

Some nice additions might be:

- Improve handling of namespaced objects

- Add features, such as network management or Load Balancer creation?

- Add more unit tests and, why not, e2e tests

- Improve CI

- Improve the overall code quality

- Test the program and create issues for it

Issue list is here: https://github.com/belgaied2/harvester-cli/issues

Resources

The project is written in Go and uses client-go, the Kubernetes Go client library, to communicate with the Harvester API (which is in fact Kubernetes).

Welcome contributions are:

- Testing it and creating issues

- Documentation

- Go code improvement

What you might learn

Harvester CLI might be interesting to you if you want to learn more about:

- GitHub Actions

- Harvester as a SUSE Product

- Go programming language

- Kubernetes API

- Kubevirt API objects (Manipulating VMs and VM Configuration in Kubernetes using Kubevirt)

Rewrite Distrobox in go (POC) by fabriziosestito

Description

Rewriting Distrobox in Go.

Main benefits:

- Easier to maintain and to test

- Adapter pattern for different container backends (LXC, systemd-nspawn, etc.)

Goals

- Build a minimal starting point with core commands

- Keep the CLI interface compatible: existing users shouldn't notice any difference

- Use a clean Go architecture with adapters for different container backends

- Keep dependencies minimal and binary size small

- Benchmark against the original shell script

Resources

- Upstream project: https://github.com/89luca89/distrobox/

- Distrobox site: https://distrobox.it/

- ArchWiki: https://wiki.archlinux.org/title/Distrobox

Create a go module to wrap happy-compta.fr by cbosdonnat

Description

https://happy-compta.fr is a simple bookkeeping tool for French works councils. While it does the job, it has no API to work with, and entering large numbers of operations by hand is tedious.

Goals

Write a Go client module that can be used as an API to manipulate the tool programmatically.

Writing an example tool to load data from a CSV file would be good too.

Updatecli Autodiscovery supporting WASM plugins by olblak

Description

Updatecli is an update policy engine written in Go that lets you write update policies as YAML manifests. Updatecli already has a plugin ecosystem for common update strategies, such as automating Dockerfile or Kubernetes manifest updates from Git repositories.

This is what we call autodiscovery, where Updatecli generates manifests and applies them dynamically based on some context.

Obviously, the Updatecli project doesn't accept plugins specific to an organization.

I have seen projects using different languages, such as Python, C#, or JS, to generate those manifests.

It would be great to be able to share and reuse those specific plugins.

During the HackWeek, I'll hang out on the Updatecli Matrix channel

https://matrix.to/#/#Updatecli_community:gitter.im

Goals

Implement autodiscovery plugins using WASM. I am planning to experiment with https://github.com/extism/extism

Build a simple WASM autodiscovery plugin and run it from Updatecli.

Resources

- https://github.com/extism/extism

- https://github.com/updatecli/updatecli

- https://www.updatecli.io/docs/core/autodiscovery/

- https://matrix.to/#/#Updatecli_community:gitter.im

Preparing KubeVirtBMC for project transfer to the KubeVirt organization by zchang

Description

KubeVirtBMC is preparing to transfer the project to the KubeVirt organization. One requirement is to enhance the modeling design's security. The current v1alpha1 API (the VirtualMachineBMC CRD) was designed during the proof-of-concept stage. It's immature and inherently insecure due to its cross-namespace object references, exposing security concerns from an RBAC perspective.

The other long-awaited feature is the ability to mount virtual media so that virtual machines can boot from remote ISO images.

Goals

- Deliver the v1beta1 API and its corresponding controller implementation

- Enable the Redfish virtual media mount function for KubeVirt virtual machines

Resources

- The KubeVirtBMC repo: https://github.com/starbops/kubevirtbmc

- The new v1beta1 API: https://github.com/starbops/kubevirtbmc/issues/83

- Redfish virtual media mount: https://github.com/starbops/kubevirtbmc/issues/44

The Agentic Rancher Experiment: Do Androids Dream of Electric Cattle? by moio

Rancher is a beast of a codebase. Let's investigate if the new 2025 generation of GitHub Autonomous Coding Agents and Copilot Workspaces can actually tame it.

The Plan

Create a sandbox GitHub Organization, clone in key Rancher repositories, and let the AI loose to see if it can handle real-world enterprise OSS maintenance - or if it just hallucinates new breeds of Kubernetes resources!

Specifically, throw "Agentic Coders" some typical tasks in a complex, long-lived open-source project, such as:

❥ The Grunt Work: generate missing GoDocs, unit tests, and refactorings. Rebase PRs.

❥ The Complex Stuff: fix actual (historical) bugs and feature requests to see if they can traverse the complexity without (too much) human hand-holding.

❥ Hunting Down Gaps: find areas lacking in docs, areas of improvement in code, dependency bumps, and so on.

If time allows, also experiment with Model Context Protocol (MCP) to give agents context on our specific build pipelines and CI/CD logs.

Why?

We know AI can write "Hello World." and also moderately complex programs from a green field. But can it rebase a 3-month-old PR with conflicts in rancher/rancher? I want to find the breaking point of current AI agents to determine if and how they can help us to reduce our technical debt, work faster and better. At the same time, find out about pitfalls and shortcomings.

The CONCLUSION!!!

A State of the Union document was compiled to summarize lessons learned this week. For more gory details, just read on in the diary below!

Rancher/k8s Trouble-Maker by tonyhansen

Project Description

When studying for my RHCSA, I found trouble-maker, which is a program that breaks a Linux OS and requires you to fix it. I want to create something similar for Rancher/k8s that can allow for troubleshooting an unknown environment.

Goals for Hackweek 25

- Update to modern Rancher and verify that existing tests still work

- Change testing logic to populate secrets instead of requiring a secondary script

- Add new tests

Goals for Hackweek 24 (Complete)

- Create a basic framework for creating Rancher/k8s cluster lab environments as needed for the Break/Fix

- Create at least 5 modules that can be applied to the cluster and require troubleshooting

Resources

- https://github.com/celidon/rancher-troublemaker

- https://github.com/rancher/terraform-provider-rancher2

- https://github.com/rancher/tf-rancher-up

- https://github.com/rancher/quickstart

Exploring Modern AI Trends and Kubernetes-Based AI Infrastructure by jluo

Description

Build a solid understanding of the current landscape of Artificial Intelligence and how modern cloud-native technologies—especially Kubernetes—support AI workloads.

Goals

Use Gemini Learning Mode to guide the exploration, surface relevant concepts, and structure the learning journey:

- Gain insight into the latest AI trends, tools, and architectural concepts.

- Understand how Kubernetes and related cloud-native technologies are used in the AI ecosystem (model training, deployment, orchestration, MLOps).

Resources

Red Hat AI Topic Articles

- https://www.redhat.com/en/topics/ai

Kubeflow Documentation

- https://www.kubeflow.org/docs/

Q4 2025 CNCF Technology Landscape Radar report:

- https://www.cncf.io/announcements/2025/11/11/cncf-and-slashdata-report-finds-leading-ai-tools-gaining-adoption-in-cloud-native-ecosystems/

- https://www.cncf.io/wp-content/uploads/2025/11/cncfreporttechradar_111025a.pdf

Agent-to-Agent (A2A) Protocol

- https://developers.googleblog.com/en/a2a-a-new-era-of-agent-interoperability/

Testing and adding GNU/Linux distributions on Uyuni by juliogonzalezgil

Join the Gitter channel! https://gitter.im/uyuni-project/hackweek

Uyuni is a configuration and infrastructure management tool that saves you time and headaches when you have to manage and update tens, hundreds or even thousands of machines. It also manages configuration, can run audits, build image containers, monitor and much more!

Currently there are a few distributions that are completely untested on Uyuni or SUSE Manager (AFAIK), or that have not been tested for a long time. It would be interesting to know how hard it is to work with them and, if possible, to fix whatever is broken.

For newcomers, the easiest distributions are those based on DEB or RPM packages. Distributions with other package formats are doable, but will require adapting the Python and Java code to be able to sync and analyze such packages (and if salt does not support those packages, it will need changes as well). So if you want a distribution with other packages, make sure you are comfortable handling such changes.

No developer experience? No worries! We had non-developer contributors in the past, and we are ready to help as long as you are willing to learn. If you don't want to code at all, you can also help us prepare the documentation after someone else has the initial code ready, or you could help with testing :-)

The idea is to test Salt (including bootstrapping with the bootstrap script) and Salt-SSH clients.

To consider that a distribution has basic support, we should cover at least (points 3-6 are to be tested for both salt minions and salt ssh minions):

- Reposync (this will require using spacewalk-common-channels and adding channels to the .ini file)

- Onboarding (salt minion from UI, salt minion from bootstrap script, and salt-ssh minion) (this will probably require adding OS to the bootstrap repository creator)

- Package management (install, remove, update...)

- Patching

- Applying any basic salt state (including a formula)

- Salt remote commands

- Bonus point: Java part for product identification, and monitoring enablement

- Bonus point: sumaform enablement (https://github.com/uyuni-project/sumaform)

- Bonus point: Documentation (https://github.com/uyuni-project/uyuni-docs)

- Bonus point: testsuite enablement (https://github.com/uyuni-project/uyuni/tree/master/testsuite)

If something is breaking, we can try to fix it, but the main idea is to research how well supported it is right now. Beyond that, it's up to each project member how much to hack :-)

- If you don't have knowledge about some of the steps: ask the team

- If you still don't know what to do: switch to another distribution and keep testing.

This card is for EVERYONE, not just developers. Seriously! We had people from other teams helping that were not developers, and added support for Debian and new SUSE Linux Enterprise and openSUSE Leap versions :-)

In progress/done for Hack Week 25

Guide

We started writing a guide, "Adding a new client GNU Linux distribution to Uyuni", at https://github.com/uyuni-project/uyuni/wiki/Guide:-Adding-a-new-client-GNU-Linux-distribution-to-Uyuni, to make things easier for everyone, especially those who are not too familiar with Uyuni or not technical.

openSUSE Leap 16.0

The distribution we all love!

https://en.opensuse.org/openSUSE:Roadmap#DRAFTScheduleforLeap16.0

Current status: we started last year, and it's complete now for Hack Week 25! :-D

- [W] Reposync (this will require using spacewalk-common-channels and adding channels to the .ini file). NOTE: Done; the client tools for SLMicro6 are used, as those for SLE16.0/openSUSE Leap 16.0 are not available yet.

- [W] Onboarding (salt minion from UI, salt minion from bootstrap script, and salt-ssh minion) (this will probably require adding OS to the bootstrap repository creator)

- [W] Package management (install, remove, update...). Works, even reboot requirement detection.

openQA tests needles elaboration using AI image recognition by mdati

Description

In the openQA test framework, the status of a target SUT is identified from a screenshot of its GUI or CLI terminal. The needles framework scans the many pictures in its repository, each associated with a given set of tags (strings) and selecting specific smaller parts of the image. To manage needles we currently need to store many screenshots, variants of GUI and CLI-terminal images, each one accompanied by a dedicated set of data references (JSON).

A smarter framework, using image recognition based on AI or other image processing tools that are now widely available, could improve the matching process and hopefully reduce time and errors during image verification and detection.

Goals

The main scope of this idea is to match a "graphical" image of the console or GUI status of a running openQA test (a screenshot of a shell console or application GUI), using less time and fewer resources, and with fewer errors in data preparation and use, than the current openQA needles framework. That is:

- given a SUT (system under test) GUI or CLI-terminal screenshot, with a local distribution of pixels or text commands related to the status of a running test,

- we want to identify a desired target, e.g. a screen image status or a data/commands context,

- based on AI/ML-pretrained archives containing objects, or on other suitable image processing tools,

- possibly able to identify objects not present in the archive as well, i.e. by means of AI/ML mechanisms.

- The matching result should then be adapted so the openQA test can continue, in place of the result that the original openQA needles framework would have produced.

- We expect improvements in matching time (less time), reliability of the expected result (fewer errors), and archive maintenance when adding or removing objects (smaller DB and fewer actions).

Hackweek POC:

Main steps

- Phase 1 - Plan

  - study the available tools

  - prepare a plan for the process to build

- Phase 2 - Implement

  - write and build a draft application

- Phase 3 - Data

  - prepare the data archive from a subset of needles

  - initialize/pre-train the base archive

  - select a screenshot from the subset, removing/changing some part

- Phase 4 - Test

  - run the POC application

  - expect the image type to be identified in a good percentage of cases.

Resources

The first step of this project is precisely the identification of useful resources for the scope; some possibilities are:

- SUSE AI and other ML tools (e.g. TensorFlow)

- Tools able to manage images

- RPA test tools (e.g. Robot Framework)

- other.

Project references

- Repository: openqa-needles-AI-driven

Multimachine on-prem test with opentofu, ansible and Robot Framework by apappas

Description

A long time ago I explored using the Robot Framework for testing. A big deficiency compared to our openQA setup is that bringing up and configuring the connection to a test machine is out of scope.

Nowadays we have a way¹ to deploy SUTs outside openQA, but we only use it for cloud tests in conjunction with openQA. Using knowledge gained from that project, I am going to try to create a test scenario that replicates an openQA test but this time includes the deployment and setup of the SUT.

Goals

Create a simple multimachine test scenario in which the support server and the SUT are both created by the Robot Framework.

Resources

- https://github.com/SUSE/qe-sap-deployment

- terraform-libvirt-provider