Project description

The Verifree project is about a GPG key server. The goal is to build a key server where users are able to verify GPG keys. Admins should also be able to delete invalid keys. Verifree servers will be internal servers only and will NOT connect to the public GPG infrastructure.

The reason for the "private" GPG key server is that, at the moment, if we want to use GPG keys, we have to trust external GPG key servers, and we have no control over the data there.

GPG keys on the server will be for employees only, and we as admins will be able to control them.

We should have more than one server in the infrastructure and sync data regularly.

The long-term goal is to make this a security add-on/application and to be able to offer it to SUSE customers so they can run their own GPG key servers.

Goal for this Hackweek

Build a basic application running on SLE/openSUSE:

- be able to install it via an RPM

- configure the app and have basic functions there

- have it salted, to be able to run other servers on demand

- set up a basic workflow for key management and verification

Resources

- as inspiration I found this: https://keys.openpgp.org/about

- as software, I would like to try this GNU GPL project: https://gitlab.com/hagrid-keyserver/hagrid

Any help from others is welcome.

This project is part of:

Hack Week 21

Comments

-

over 3 years ago by crameleon

It would be cool if it still had an option to verify keys or signatures against a public, external, GPG server to catch irregularities - the benefit of the federated key servers on the internet is that if one gets compromised, one could get suspicious if they find a different key for a person on a different keyserver. Since we are only going to run one single keyserver internally, if the infrastructure gets compromised, there is no other party to ask for trust.
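A minimal sketch of the cross-check suggested here, assuming the fingerprints have already been fetched from the internal server and from one external keyserver (fetching is not shown). The function name and the dictionary layout are hypothetical and only meant as illustration:

```python
def find_key_mismatches(internal, external):
    """Return identities whose fingerprint differs between two keyservers.

    Both arguments map an identity (e.g. an email address) to a key
    fingerprint string. Identities missing on the external side are
    ignored, since an internal-only key is expected in this setup.
    """
    def normalize(fingerprint):
        # Fingerprints are often printed with spaces and mixed case.
        return fingerprint.replace(" ", "").upper()

    mismatches = {}
    for identity, internal_fpr in internal.items():
        external_fpr = external.get(identity)
        if external_fpr is None:
            continue
        if normalize(internal_fpr) != normalize(external_fpr):
            mismatches[identity] = (internal_fpr, external_fpr)
    return mismatches
```

A non-empty result does not prove a compromise (people do publish several keys), but it is the kind of irregularity that deserves a manual look.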

-

over 3 years ago by jzerebecki

There are dumps available, at least one of these sources seems to be current: https://github.com/SKS-Keyserver/sks-keyserver/wiki/KeydumpSources

-

over 3 years ago by mkoutny

Ideally, a GPG keyserver should not be the source of trust. You have a web of trust between individual keys and use the keyserver as an unreliable medium. You verify the keys locally with a (sub)set of keys, and you don't care whether the server is or isn't compromised. (You may only be affected by its unavailability.)

Also, in my understanding, the internal keyserver is not supposed to distribute arbitrary keys, only those associated with SUSE accounts.

-

over 3 years ago by jzerebecki

Yes, GPG/OpenPGP currently has no mechanism to ensure one is up to date on revocations. So it is vulnerable to keyservers being made to not serve some revocations.

In theory it is possible to fix this, but I know of no practical implementation.

Even if you do not solve this, it is a good idea to ingest the updates from the keyserver gossip. If you do not, you refuse information you can verify, which is even worse.

-

over 3 years ago by jzerebecki

Note that hagrid is both not GDPR compliant and removes important information (e.g. signatures and revocations used for Web of Trust) in a failed attempt at GDPR compliance: https://gitlab.com/hagrid-keyserver/hagrid/-/issues/151

I can think of two ways that work right now for exchanging keys while avoiding problems with GDPR:

1) Exchange keys by exporting, encrypting, and ASCII armoring them, and then attaching them to a mail; exchange certification requests and confirmations by saving them as a text file, signing, encrypting, and ASCII armoring it, and then attaching it to a mail. This avoids many of the things people stumbled over, which were common even before the DoS vulnerability of keyservers was more widely known.

2) Maintain the keys involved in a project in a git repository. Have the git repo contain a privacy policy that explains the consequences. To submit their key, people create a commit that is signed by the same key that it adds in ASCII armored form. This means the privacy policy is in the history of the commit they signed, so they could have read it. When someone asks for their info to be deleted, delete the repo and start a new history without this key. This has the advantage that everyone who already had a copy of the repo notices on the next git pull and can easily understand which key was deleted. Without this advantage, you could get into a situation where you never receive updates, like a revocation, for a key without knowing it. (kernel.org uses a similar repo, explained at https://lore.kernel.org/lkml/20190830143027.cffqda2vzggrtiko@chatter.i7.local/ .) You still need to use (1) for requesting and confirming certifications.

One could use a CI to make additions and updates to a git repo like in (2) self-service. Thus you could create a pull request, and once CI passes it gets merged automatically. This CI job would: allow adding a key that is trusted by existing ones, with the commit signed by the added key; for anything else, require that the commit signature is valid and from a key already in this repo; allow updating a key when importing the old key into the new one doesn't add anything; allow editing docs; check that generated data matches if touched; and deny anything else.
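The rules for such a CI job could be sketched roughly as below. This is only an illustration of the decision logic: the `Change` record, its field names, and the change kinds are hypothetical, and all cryptographic checks (signature validity, certifications, key comparison) are assumed to have been done beforehand by real OpenPGP tooling.

```python
from dataclasses import dataclass


@dataclass
class Change:
    # All fields are hypothetical inputs computed earlier in the CI job.
    kind: str                   # "add_key", "update_key", "edit_docs", "generated_data", ...
    signer: str                 # fingerprint of the key that signed the commit
    signature_valid: bool       # commit signature verified successfully
    key: str = ""               # fingerprint of the key being added or updated
    certified_by: frozenset = frozenset()  # fingerprints that certified the added key
    new_is_superset: bool = False    # importing the old key into the new one adds nothing
    generated_matches: bool = False  # regenerated data equals the committed data


def ci_allows(change, repo_keys):
    """Return True if the change may be merged automatically."""
    if not change.signature_valid:
        return False
    if change.kind == "add_key":
        # New key: signed by itself and trusted by at least one existing key.
        return change.signer == change.key and bool(change.certified_by & repo_keys)
    # Anything else must be signed by a key already in the repo.
    if change.signer not in repo_keys:
        return False
    if change.kind == "update_key":
        return change.new_is_superset
    if change.kind == "edit_docs":
        return True
    if change.kind == "generated_data":
        return change.generated_matches
    return False  # deny anything else
```

For example, with `repo_keys = frozenset({"AAA", "BBB"})`, a self-signed `add_key` for `"CCC"` certified by `"AAA"` passes, while a key removal is denied and has to go through the manual new-history procedure from (2).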

The gpg reimplementation that hagrid uses as a library is https://docs.sequoia-pgp.org/sequoia_openpgp/ which is recommended.

Similar Projects

AI-Powered Unit Test Automation for Agama by joseivanlopez

The Agama project is a multi-language Linux installer that leverages the distinct strengths of several key technologies:

- Rust: Used for the back-end services and the core HTTP API, providing performance and safety.

- TypeScript (React/PatternFly): Powers the modern web user interface (UI), ensuring a consistent and responsive user experience.

- Ruby: Integrates existing, robust YaST libraries (e.g., yast-storage-ng) to reuse established functionality.

The Problem: Testing Overhead

Developing and maintaining code across these three languages requires a significant, tedious effort in writing, reviewing, and updating unit tests for each component. This high cost of testing is a drain on developer resources and can slow down the project's evolution.

The Solution: AI-Driven Automation

This project aims to eliminate the manual overhead of unit testing by exploring and integrating AI-driven code generation tools. We will investigate how AI can:

- Automatically generate new unit tests as code is developed.

- Intelligently correct and update existing unit tests when the application code changes.

By automating this crucial but monotonous task, we can free developers to focus on feature implementation and significantly improve the speed and maintainability of the Agama codebase.

Goals

- Proof of Concept: Successfully integrate and demonstrate an authorized AI tool (e.g., gemini-cli) to automatically generate unit tests.

- Workflow Integration: Define and document a new unit test automation workflow that seamlessly integrates the selected AI tool into the existing Agama development pipeline.

- Knowledge Sharing: Establish a set of best practices for using AI in code generation, sharing the learned expertise with the broader team.

Contribution & Resources

We are seeking contributors interested in AI-powered development and improving developer efficiency. Whether you have previous experience with code generation tools or are eager to learn, your participation is highly valuable.

If you want to dive deep into AI for software quality, please reach out and join the effort!

- Authorized AI Tools: Tools supported by SUSE (e.g., gemini-cli)

- Focus Areas: Rust, TypeScript, and Ruby components within the Agama project.

Learn a bit of embedded programming with Rust in a micro:bit v2 by aplanas

Description

micro:bit is a small single-board computer with an ARM Cortex-M4 with the FPU extension, a very constrained amount of memory, and a bunch of sensors and LEDs.

The board is very well documented, with schematics and code for all the features available, so it is an excellent platform for learning embedded programming.

Rust is a systems programming language that can generate ARM code and has crates (libraries) to access the micro:bit hardware. There is plenty of documentation about how to make small programs that will run on the micro:bit.

Goals

Start learning about embedded programming in Rust, and maybe write some code for the small KS4036F Robot car from keyestudio.

Resources

- micro:bit

- KS4036F

- microbit technical documentation

- schematic

- impl Rust for micro:bit

- Rust Embedded MB2 Discovery Book

- nRF-HAL

- nRF Microbit-v2 BSP (blocking)

- knurling-rs

- C++ microbit codal

- microbit-bsp for Embassy

- Embassy

Diary

Day 1

- Start reading https://mb2.implrust.com/abstraction-layers.html

- Prepare the dev environment (cross compiler, probe-rs)

- Flash first code in the board (blinky led)

- Checking differences between BSP and HAL

- Compile and install a more complex example, with stack protection

- Reading about the simplicity of xtask, as alias for workspace execution

- Reading the CPP code of the official micro:bit libraries. They have a font!

Day 2

- There are multiple BSPs for the microbit. One is using async code for non-blocking operations

- Download and study a bit the API for microbit-v2, the nRF official crate

- Take a look at the KS4036F programming; it seems that the communication is multiplexed via I2C

- The motor speed can be selected via PWM (pulse width modulation): keep it powered for a longer part of each period (higher duty cycle), and it will increase the speed

- Scrolling some text

- Debug by printing! defmt is a crate that can be used with probe-rs to emit logs

- Start reading input from the board: buttons

- The logo can be touched and detected as a floating point value
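As a small illustration of the PWM note in the Day 2 list: what controls the motor speed is the fraction of each period during which the pin is high (the duty cycle), not the frequency itself. The sketch below is plain Python rather than micro:bit code, and the 3.0 V supply and 1000 us period are made-up numbers, not values taken from the KS4036F.

```python
def pwm_average_voltage(supply_v, on_time_us, period_us):
    """Average voltage seen by the motor over one PWM period."""
    if period_us <= 0 or not 0 <= on_time_us <= period_us:
        raise ValueError("on_time_us must be between 0 and period_us")
    duty_cycle = on_time_us / period_us  # fraction of the period the pin is high
    return supply_v * duty_cycle


# Same 1000 us period (same frequency), different on-times:
print(pwm_average_voltage(3.0, 250, 1000))  # 0.75
print(pwm_average_voltage(3.0, 500, 1000))  # 1.5
```

Doubling the on-time doubles the average voltage, which is why the motor turns faster even though the switching frequency is unchanged.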

Day 3

- A bit confused how to read the float value from a pin

OpenPlatform Self-Service Portal by tmuntan1

Description

In SUSE IT, we developed an internal developer platform for our engineers using SUSE technologies such as RKE2, SUSE Virtualization, and Rancher. While it works well for our existing users, the onboarding process could be better.

To improve our customer experience, I would like to build a self-service portal to make it easy for people to accomplish common actions. To get started, I would have the portal create Jira SD tickets for our customers to have better information in our tickets, but eventually I want to add automation to reduce our workload.

Goals

- Build a frontend website (Angular) that helps customers create Jira SD tickets.

- Build a backend (Rust with Axum) that does all the hard work for the frontend.

Resources (SUSE VPN only)

- development site: https://ui-dev.openplatform.suse.com/login?returnUrl=%2Fopenplatform%2Fforms

- https://gitlab.suse.de/itpe/core/open-platform/op-portal/backend

- https://gitlab.suse.de/itpe/core/open-platform/op-portal/frontend

Mail client with mailing list workflow support in Rust by acervesato

Description

The idea is to create a mail user interface in Rust that supports the mailing list patch workflow. I know, aerc is already there, but I would like to create something simpler, without integrated protocols: just a plain user interface that uses some crates to read and create emails, which are fetched and sent via external tools.

I already know Rust, but not the async support, which is needed in this case in order to handle events inside the mail folder and to send notifications.

Goals

- simple user interface in the style of aerc, with some vim keybindings for motions and search

- automatic run of external tools (like mbsync) for checking emails

- automatic run of commands for notifications

- apply patch set from ML

- tree-sitter support with styles

Resources

- ratatui: user interface (https://ratatui.rs/)

- notify: folder watcher (https://docs.rs/notify/latest/notify/)

- mail-parser: parser for emails (https://crates.io/crates/mail-parser)

- mail-builder: create emails in proper format (https://docs.rs/mail-builder/latest/mail_builder/)

- gitpatch: ML support (https://crates.io/crates/gitpatch)

- tree-sitter-rust: support for mail format (https://crates.io/crates/tree-sitter)

RMT.rs: High-Performance Registration Path for RMT using Rust by gbasso

Description

The SUSE Repository Mirroring Tool (RMT) is a critical component for managing software updates and subscriptions, especially for our Public Cloud Team (PCT). In a cloud environment, hundreds or even thousands of new SUSE instances (VPS/EC2) can be provisioned simultaneously. Each new instance attempts to register against an RMT server, creating a "thundering herd" scenario.

We have observed that the current RMT server, written in Ruby, faces performance issues under this high-concurrency registration load. This can lead to request overhead, slow registration times, and outright registration failures, delaying the readiness of new cloud instances.

This Hackweek project aims to explore a solution by re-implementing the performance-critical registration path in Rust. The goal is to leverage Rust's high performance, memory safety, and first-class concurrency handling to create an alternative registration endpoint that is fast, reliable, and can gracefully manage massive, simultaneous request spikes.

The new Rust module will be integrated into the existing RMT Ruby application, allowing us to directly compare the performance of both implementations.

Goals

The primary objective is to build and benchmark a high-performance Rust-based alternative for the RMT server registration endpoint.

Key goals for the week:

- Analyze & Identify: Dive into the SUSE/rmt Ruby codebase to identify and map out the exact critical path for server registration (e.g., controllers, services, database interactions).

- Develop in Rust: Implement a functionally equivalent version of this registration logic in Rust.

- Integrate: Explore and implement a method for Ruby/Rust integration to "hot-wire" the new Rust module into the RMT application. This may involve using FFI, or libraries like rb-sys or magnus.

- Benchmark: Create a benchmarking script (e.g., using k6, ab, or a custom tool) that simulates the high-concurrency registration load from thousands of clients.

- Compare & Present: Conduct a comparative performance analysis (requests per second, latency, success/error rates, CPU/memory usage) between the original Ruby path and the new Rust path. The deliverable will be this data and a summary of the findings.
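For the comparison step, the raw samples from either implementation could be reduced to the listed metrics with something like the sketch below. It assumes the benchmark tool exports one latency per successful request plus an error count; the function name and the result keys are hypothetical, and percentiles use the simple nearest-rank method.

```python
def summarize_run(latencies_ms, errors, duration_s):
    """Reduce one benchmark run to throughput, latency percentiles and error rate."""
    if not latencies_ms or duration_s <= 0:
        raise ValueError("need at least one successful request and a positive duration")
    ordered = sorted(latencies_ms)
    total = len(ordered) + errors

    def percentile(p):
        # Nearest-rank percentile, computed with integer arithmetic.
        rank = max(1, (p * len(ordered) + 99) // 100)
        return ordered[rank - 1]

    return {
        "requests_per_second": total / duration_s,
        "p50_ms": percentile(50),
        "p95_ms": percentile(95),
        "error_rate": errors / total,
    }
```

Running it once on the Ruby path's samples and once on the Rust path's samples, under the same load profile, gives two dictionaries that can be compared side by side.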

Resources

- RMT Source Code (Ruby): https://github.com/SUSE/rmt

- RMT Documentation: https://documentation.suse.com/sles/15-SP7/html/SLES-all/book-rmt.html

- Tooling & Stacks:

  - RMT/Ruby development environment (for running the base RMT)

  - Rust development environment (rustup, cargo)

- Potential Integration Libraries:

  - rb-sys: https://github.com/oxidize-rb/rb-sys

  - Magnus: https://github.com/matsadler/magnus

- Benchmarking Tools: k6 (https://k6.io/), ab (ApacheBench)

OSHW USB token for Passkeys (FIDO2, U2F, WebAuthn) and PGP by duwe

Description

The idea of carrying your precious key material along in a specially secured hardware item is almost as old as public keys themselves, starting with the OpenPGP card. Nowadays, a USB plug or NFC are the hardware interfaces of choice, and password-less log-ins are fortunately becoming more popular and standardised.

Meanwhile there are a few products available in that field, for example

yubikey - the "market leader", who continues to sell off buggy, allegedly unfixable firmware ROMs from old stock. Needless to say, it's all but open source, so assume backdoors.

nitrokey - the "start" variant is open source, but the hardware was found to leak its flash ROM content via the SWD debugging interface (even when the flash is read protected !) Compute power is barely enough for Curve25519, Flash memory leaves room for only 3 keys.

solokey(2) - quite neat hardware, with a secure enclave called "TrustZone-M". Unfortunately, the OSS firmware development is stuck in a rusty dead end and cannot use it. Besides, NXP's support for open source toolchains for its devboards is extremely limited.

I plan to base this project on the not-so-tiny USB stack, which is extremely easy to retarget, and to rewrite / refactor the crypto protocols to use the keys only via handles, so the actual key material can be stored securely. Best OSS support seems to be for STM32-based products.

Goals

Create a proof-of-concept item that can provide a second factor for logins and/or decrypt a PGP mail with your private key without disclosing the key itself. Implement or at least show a migration path to store the private key in a location with elevated hardware security.

Resources

STM32 Nucleo, blackmagic probe, tropicsquare tropic01, arm-none cross toolchain

vex8s-controller: a kubernetes controller to automatically generate VEX documents of your running workloads by agreggi

Description

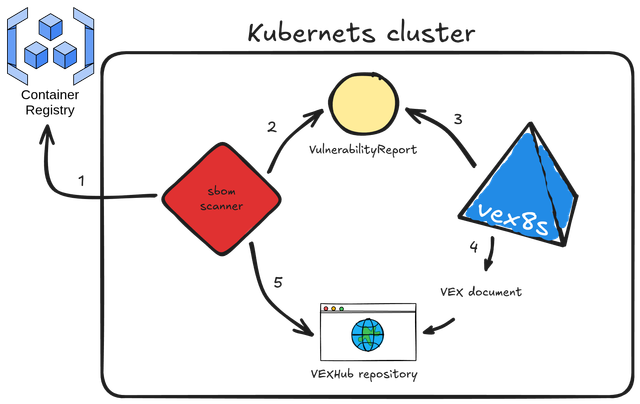

vex8s-controller is an add-on for the SBOMscanner project.

Its purpose is to automatically generate VEX documents based on the workloads running in a kubernetes cluster. It integrates directly with SBOMscanner by monitoring VulnerabilityReports created for container images and producing corresponding VEX documents that reflect each workload’s SecurityContext.

Here's the workflow explained:

- sbomscanner scans for images in the registry

- sbomscanner generates a VulnerabilityReport with the image CVEs

- vex8s-controller triggers when a workload is scheduled on the cluster and generates a VEX document based on the workload SecurityContext configuration

- the VEX document is provided by vex8s-controller using a VEX Hub repository

- sbomscanner's VEXHub CRD is configured to point to the internal vex8s-controller VEX Hub repository

Goals

The objective is to build a kubernetes controller that uses the vex8s mitigation rules engine to generate VEX documents and serve them through an internal VEX Hub repository within the cluster.

SBOMscanner can then be configured to consume VEX data directly from this in-cluster repository managed by vex8s-controller.

Summary

The project ended up with this PoC on GitHub: vex8s-controller.

The controller works fine, but needs work to make it more stable. Instructions to reproduce a demo locally are reported in the repository.

Help Create A Chat Control Resistant Turnkey Chatmail/Deltachat Relay Stack - Rootless Podman Compose, OpenSUSE BCI, Hardened, & SELinux by 3nd5h1771fy

Description

The Mission: Decentralized & Sovereign Messaging

FYI: If you have never heard of "Chatmail", you can visit their site here, but simply put, it can be thought of as the underlying protocol/platform that decentralized messengers like DeltaChat use for their communications. Do not confuse it with the honeypot-looking, non-open-source, paid-for product with better SEO that directs you to chatmailsecure(dot)com

In an era of increasing centralized surveillance by unaccountable bad actors (aka BigTech), "Chat Control," and the erosion of digital privacy, the need for sovereign communication infrastructure is critical. Chatmail is a pioneering initiative that bridges the gap between classic email and modern instant messaging, offering metadata-minimized, end-to-end encrypted (E2EE) communication that is interoperable and open.

However, unless you are a seasoned sysadmin, the current recommended deployment method of a Chatmail relay is rigid, fragile, difficult to properly secure, and effectively takes over the entire host the "relay" is deployed on.

Why This Matters

A simple, host agnostic, reproducible deployment lowers the entry cost for anyone wanting to run a privacy‑preserving, decentralized messaging relay. In an era of perpetually resurrected chat‑control legislation threats, EU digital‑sovereignty drives, and many dangers of using big‑tech messaging platforms (Apple iMessage, WhatsApp, FB Messenger, Instagram, SMS, Google Messages, etc...) for any type of communication, providing an easy‑to‑use alternative empowers:

- Censorship resistance - No single entity controls the relay; operators can spin up new nodes quickly.

- Surveillance mitigation - End‑to‑end OpenPGP encryption ensures relay operators never see plaintext.

- Digital sovereignty - Communities can host their own infrastructure under local jurisdiction, aligning with national data‑policy goals.

By turning the Chatmail relay into a plug‑and‑play container stack, we enable broader adoption, foster a resilient messaging fabric, and give developers, activists, and hobbyists a concrete tool to defend privacy online.

Goals

As I indicated earlier, this project aims to drastically simplify the deployment of Chatmail relay. By converting this architecture into a portable, containerized stack using Podman and OpenSUSE base container images, we can allow anyone to deploy their own censorship-resistant, privacy-preserving communications node in minutes.

Our goal for Hack Week: package every component into containers built on openSUSE/MicroOS base images, initially orchestrated with a single container-compose.yml (podman-compose compatible). The stack will:

- Run on any host that supports Podman (including optimizations and enhancements for SELinux‑enabled systems).

- Allow network decoupling by refactoring configurations to move from file-system constrained Unix sockets to internal TCP networking, allowing containers to achieve stricter isolation.

- Utilize enhanced security with SELinux: by using purpose-built utilities such as udica, we can quickly generate custom SELinux policies for the container stack, ensuring strict confinement superior to standard/typical Docker deployments.

- Allow the use of bind or remote mounted volumes for shared data (/var/vmail, DKIM keys, TLS certs, etc.).

- Replace the local DNS server requirement with a remote DNS‑provider API for DKIM/TXT record publishing.

By delivering a turnkey, host agnostic, reproducible deployment, we lower the barrier for individuals and small communities to launch their own chatmail relays, fostering a decentralized, censorship‑resistant messaging ecosystem that can serve DeltaChat users and/or future services adopting this protocol

Resources

- The links included above

- https://chatmail.at/doc/relay/

- https://delta.chat/en/help

- Project repo -> https://codeberg.org/EndShittification/containerized-chatmail-relay

Exploring Rust's potential: from basics to security by sferracci

Description

This project aims to conduct a focused investigation and practical application of the Rust programming language, with a specific emphasis on its security model. A key component will be identifying and understanding the most common vulnerabilities that can be found in Rust code.

Goals

Achieve a beginner/intermediate level of proficiency in writing Rust code. This will be measured by trying to solve LeetCode problems focusing on common data structures and algorithms. Study Rust vulnerabilities and learn best practices to avoid them.

Resources

Rust book: https://doc.rust-lang.org/book/

Looking at Rust if it could be an interesting programming language by jsmeix

Get some basic understanding of Rust security related features from a general point of view.

This Hack Week project is not to learn Rust to become a Rust programmer. This might happen later but it is not the goal of this Hack Week project.

The goal of this Hack Week project is to evaluate if Rust could be an interesting programming language.

An interesting programming language must make it easier to write code that is correct and stays correct when over time others maintain and enhance it than the opposite.