Description

Backstage (backstage.io) is an open-source, CNCF project that allows you to create your own developer portal. There are many plugins for Backstage.

This could be a great complement to Rancher Manager.

Goals

Learn and experiment with Backstage and look at how it could be integrated with Rancher Manager. The goal is to have some kind of integration completed during this Hack Week.

Progress

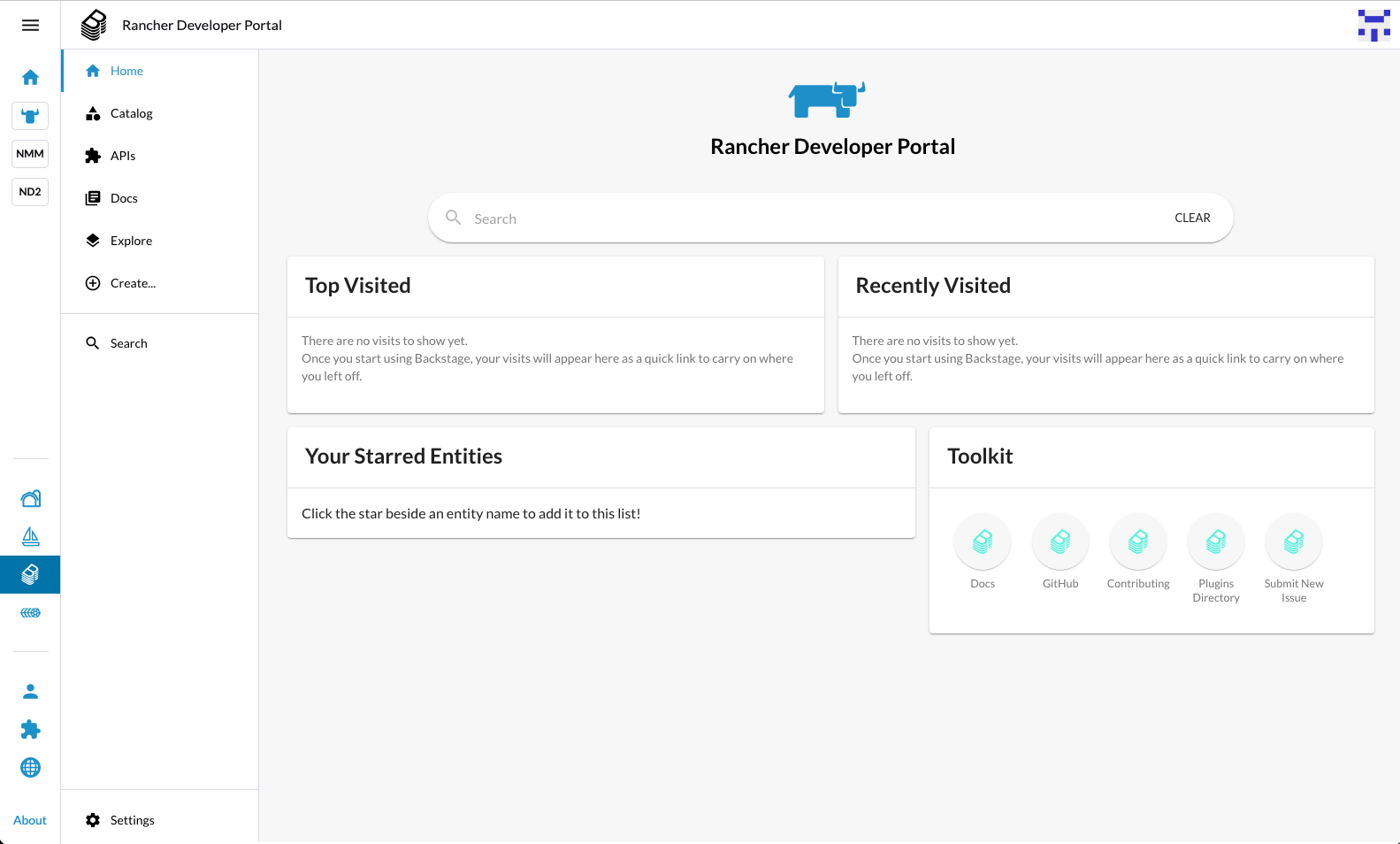

Screen shot of home page at the end of Hackweek:

Day One

- Got Backstage running locally and worked out how to configure it with HTTPS.

- Got Backstage embedded in an IFRAME inside of Rancher

- Added content into the software catalog (see: https://backstage.io/docs/features/techdocs/getting-started/)

- Understood more about the entity model

Day Two

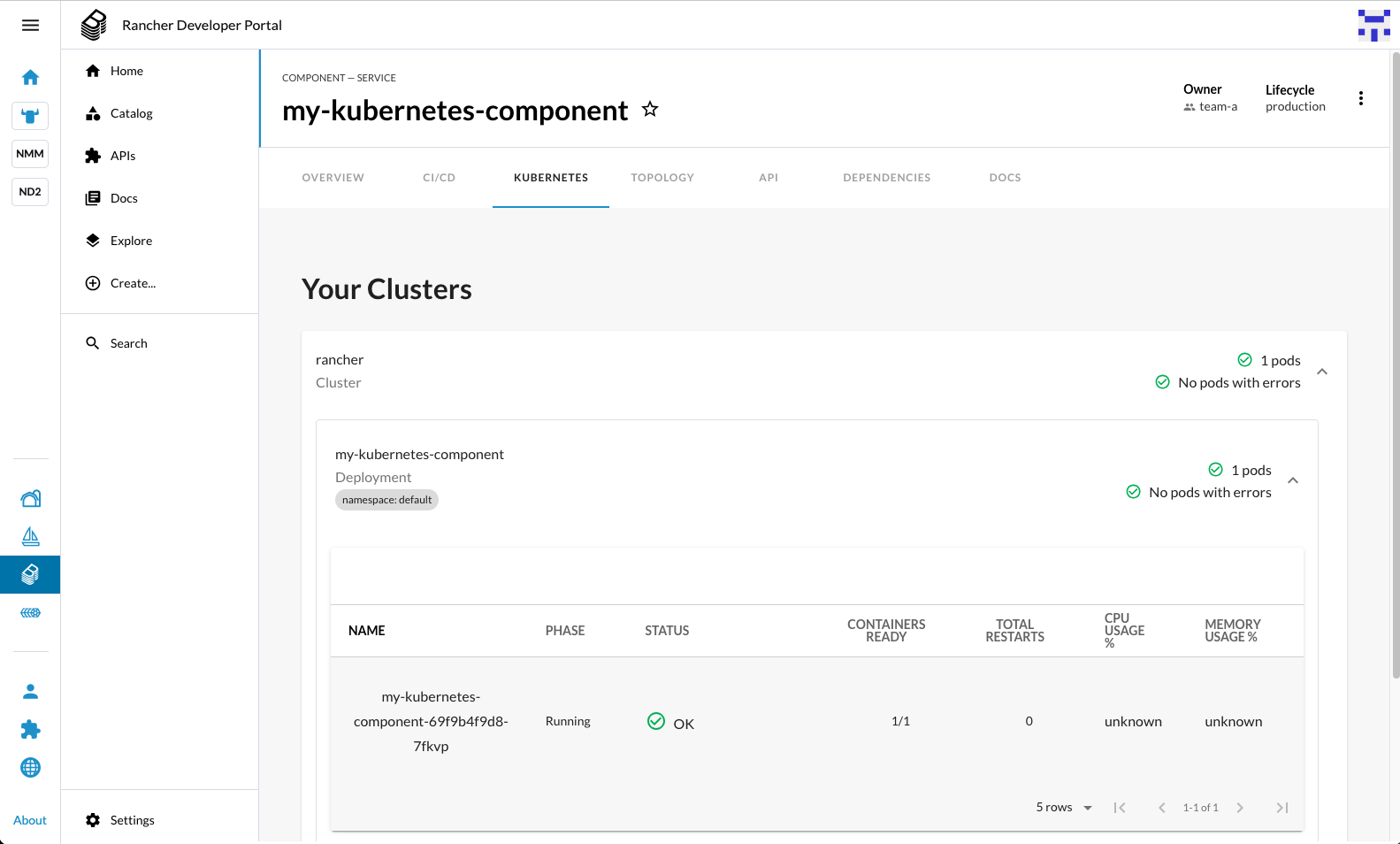

- Connected Backstage to the Rancher local cluster and configured the Kubernetes plugin.

- Created Rancher theme to make the light theme more consistent with Rancher

Days Three and Four

Created two backend plugins for Backstage:

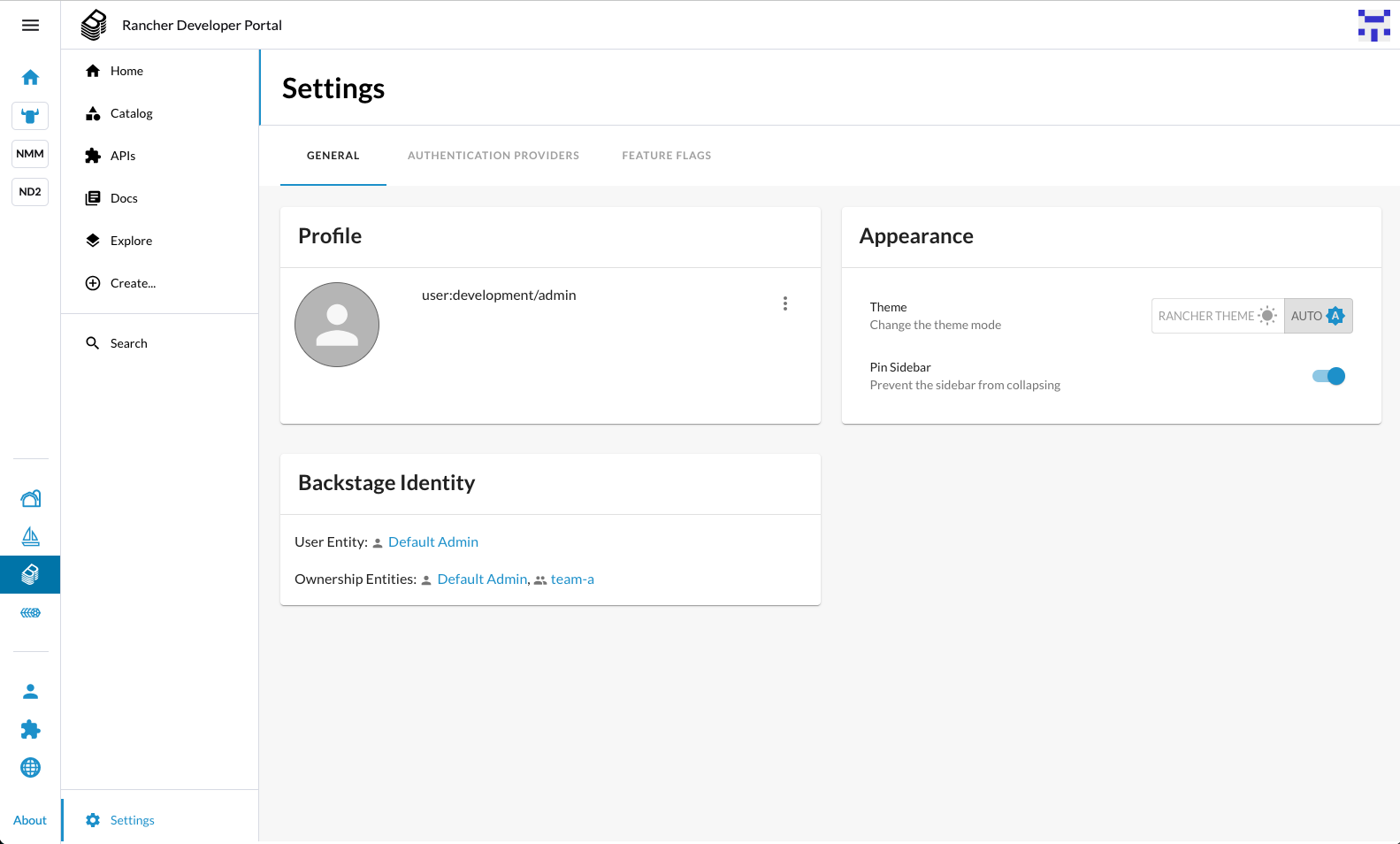

- Catalog Entity Provider - this imports users from Rancher into Backstage

- Auth Provider - uses the proxied sign-in pattern to check the Rancher session cookie, uses that cookie to authenticate the user with Rancher, and then logs them into Backstage by connecting the session to the User entity imported by the catalog entity provider plugin.

With this in place, you can single sign-on between Rancher and Backstage when Backstage is deployed within Rancher. Note that this currently works only when running locally for development.
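The two plugins can be pictured with a small, self-contained sketch. It is not the code written during the week and it uses no Backstage packages. It only shows the data flow: a Rancher user becomes a Backstage `User` entity, and a session cookie is read from the request so it can later be resolved to that entity. The `RancherUser` shape, the annotation key, and the cookie name `R_SESS` are assumptions for illustration.

```typescript
// Minimal shape of a Backstage User entity (catalog apiVersion backstage.io/v1alpha1).
interface UserEntity {
  apiVersion: "backstage.io/v1alpha1";
  kind: "User";
  metadata: { name: string; annotations?: Record<string, string> };
  spec: { profile?: { displayName?: string }; memberOf: string[] };
}

// Hypothetical subset of a Rancher user record; real records carry more fields.
interface RancherUser {
  id: string;
  username: string;
  name?: string;
}

// Catalog entity names are limited to 63 characters of [a-zA-Z0-9] with single
// separators, so anything else is collapsed into "-". An input with no
// alphanumeric characters yields an empty string, which the caller must reject.
function toEntityName(raw: string): string {
  return raw
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .slice(0, 63)
    .replace(/^-+|-+$/g, "");
}

// Entity provider side: map one Rancher user to one User entity.
function toUserEntity(user: RancherUser): UserEntity {
  return {
    apiVersion: "backstage.io/v1alpha1",
    kind: "User",
    metadata: {
      name: toEntityName(user.username),
      // Hypothetical annotation key linking the entity back to Rancher.
      annotations: { "rancher.example/user-id": user.id },
    },
    spec: { profile: { displayName: user.name ?? user.username }, memberOf: [] },
  };
}

// Auth provider side: read a single cookie value from a raw Cookie header.
function readCookie(header: string | undefined, name: string): string | undefined {
  if (!header) return undefined;
  for (const part of header.split(";")) {
    const idx = part.indexOf("=");
    if (idx < 0) continue;
    if (part.slice(0, idx).trim() === name) return part.slice(idx + 1).trim();
  }
  return undefined;
}

// String form of an entity reference: kind:namespace/name.
function userEntityRef(entity: UserEntity): string {
  return `user:default/${entity.metadata.name}`;
}
```

In a real auth provider the cookie value would be validated against Rancher before the entity reference is issued; the sketch leaves that call out because its details are not described here.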

Day Five

- Started to build out a production deployment for all of the above

- Made some progress, but hit issues with authentication and proxying when running proxied within Rancher; these need further investigation

This project is part of:

Hack Week 24

Similar Projects

Liz - Prompt autocomplete by ftorchia

Description

Liz is the Rancher AI assistant for cluster operations.

Goals

We want to help users when sending new messages to Liz, by adding an autocomplete feature to complete their requests based on the context.

Example:

- User prompt: "Can you show me the list of p"

- Autocomplete suggestion: "Can you show me the list of p...od in local cluster?"

Example:

- User prompt: "Show me the logs of #rancher-"

- Chat console: It shows a drop-down widget, next to the # character, with the list of available pod names starting with "rancher-".
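The `#` example can be sketched as a prefix filter on the client side. This is only an illustration of the described behaviour, not Liz's implementation. The function names, the allowed characters after `#`, and the result limit are assumptions.

```typescript
// Return the text typed after a trailing "#", or undefined if the prompt
// does not end with a "#prefix" token.
function trailingResourcePrefix(prompt: string): string | undefined {
  const match = /#([A-Za-z0-9.-]*)$/.exec(prompt);
  return match ? match[1] : undefined;
}

// Pod names to show in the drop-down for the current prompt.
function suggestPods(prompt: string, podNames: string[], limit = 10): string[] {
  const prefix = trailingResourcePrefix(prompt);
  if (prefix === undefined) return [];
  return podNames
    .filter((name) => name.startsWith(prefix))
    .sort()
    .slice(0, limit);
}

// Replace the trailing "#prefix" token with the pod name the user picked.
function applySuggestion(prompt: string, podName: string): string {
  return prompt.replace(/#[A-Za-z0-9.-]*$/, () => podName);
}
```

With the prompt `Show me the logs of #rancher-`, only pod names starting with `rancher-` are returned, and picking one rewrites the end of the prompt.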

Technical Overview

- The AI agent should expose a new ws/autocomplete endpoint to proxy autocomplete messages to the LLM.

- The UI extension should be able to display prompt suggestions and allow users to apply the autocomplete to the Prompt via keyboard shortcuts.

Resources

Cluster API Provider for Harvester by rcase

Project Description

The Cluster API "infrastructure provider" for Harvester, also named CAPHV, makes it possible to use Harvester with Cluster API. This enables people and organisations to create Kubernetes clusters running on VMs created by Harvester using a declarative spec.

The project has been bootstrapped in HackWeek 23, and its code is available here.

Work done in HackWeek 2023

- Have an early working version of the provider available on Rancher Sandbox: DONE

- Demonstrated the created cluster can be imported using Rancher Turtles: DONE

- Stretch goal - demonstrate using the new provider with CAPRKE2: DONE and the templates are available on the repo

DONE in HackWeek 24:

- Add more Unit Tests

- Improve Status Conditions for some phases

- Add cloud provider config generation

- Testing with Harvester v1.3.2

- Template improvements

- Issues creation

DONE in 2025 (out of Hackweek)

- Support of ClusterClass

- Add to `clusterctl` community providers, you can add it directly with `clusterctl`

- Testing on newer versions of Harvester v1.4.X and v1.5.X

- Support for `clusterctl generate cluster ...`

- Improve Status Conditions to reflect current state of Infrastructure

- Improve CI (some bugs for release creation)

Goals for HackWeek 2025

- FIRST and FOREMOST, any topic that is important to you

- Add e2e testing

- Certify the provider for Rancher Turtles

- Add Machine pool labeling

- Add PCI-e passthrough capabilities.

- Other improvement suggestions are welcome!

Thanks to @isim and Dominic Giebert for their contributions!

Resources

Looking for help from anyone interested in Cluster API (CAPI) or who wants to learn more about Harvester.

This will be an infrastructure provider for Cluster API. Some background reading for the CAPI aspect:

The Agentic Rancher Experiment: Do Androids Dream of Electric Cattle? by moio

Rancher is a beast of a codebase. Let's investigate if the new 2025 generation of GitHub Autonomous Coding Agents and Copilot Workspaces can actually tame it.

The Plan

Create a sandbox GitHub Organization, clone in key Rancher repositories, and let the AI loose to see if it can handle real-world enterprise OSS maintenance - or if it just hallucinates new breeds of Kubernetes resources!

Specifically, throw "Agentic Coders" some typical tasks in a complex, long-lived open-source project, such as:

❥ The Grunt Work: generate missing GoDocs, unit tests, and refactorings. Rebase PRs.

❥ The Complex Stuff: fix actual (historical) bugs and feature requests to see if they can traverse the complexity without (too much) human hand-holding.

❥ Hunting Down Gaps: find areas lacking in docs, areas of improvement in code, dependency bumps, and so on.

If time allows, also experiment with Model Context Protocol (MCP) to give agents context on our specific build pipelines and CI/CD logs.

Why?

We know AI can write "Hello World." and also moderately complex programs from a green field. But can it rebase a 3-month-old PR with conflicts in rancher/rancher? I want to find the breaking point of current AI agents to determine if and how they can help us to reduce our technical debt, work faster and better. At the same time, find out about pitfalls and shortcomings.

The CONCLUSION!!!

A State of the Union document was compiled to summarize lessons learned this week. For more gory details, just read on the diary below!

Rancher/k8s Trouble-Maker by tonyhansen

Project Description

When studying for my RHCSA, I found trouble-maker, which is a program that breaks a Linux OS and requires you to fix it. I want to create something similar for Rancher/k8s that can allow for troubleshooting an unknown environment.

Goals for Hackweek 25

- Update to modern Rancher and verify that existing tests still work

- Change testing logic to populate secrets instead of requiring a secondary script

- Add new tests

Goals for Hackweek 24 (Complete)

- Create a basic framework for creating Rancher/k8s cluster lab environments as needed for the Break/Fix

- Create at least 5 modules that can be applied to the cluster and require troubleshooting

Resources

- https://github.com/celidon/rancher-troublemaker

- https://github.com/rancher/terraform-provider-rancher2

- https://github.com/rancher/tf-rancher-up

- https://github.com/rancher/quickstart

SUSE Virtualization (Harvester): VM Import UI flow by wombelix

Description

SUSE Virtualization (Harvester) has a vm-import-controller that allows migrating VMs from VMware and OpenStack, but users need to write manifest files and apply them with kubectl to use it. This project is about adding the missing UI pieces to the harvester-ui-extension, making VM Imports accessible without requiring Kubernetes and YAML knowledge.

VMware and OpenStack admins aren't automatically familiar with Kubernetes and YAML. Implementing the UI part for the VM Import feature makes it easier to use and more accessible. The Harvester Enhancement Proposal (HEP) VM Migration controller included a UI flow implementation in its scope. Issue #2274 received multiple comments that a UI integration would be a nice addition, and issue #4663 was created to request the implementation but eventually stalled.

Right now users need to manually create either VmwareSource or OpenstackSource resources, then write VirtualMachineImport manifests with network mappings and all the other configuration options. Users should be able to do that and track import status through the UI without writing YAML.
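As a rough sketch of what a form in the UI would have to produce, the function below turns form input into a `VirtualMachineImport` object. The API group/version and the field names (`virtualMachineName`, `networkMapping`, `sourceCluster`) are written from memory of the vm-import-controller documentation and should be checked against the installed CRDs. The `ImportForm` type and the function name are hypothetical.

```typescript
// Assumed API group/version of the vm-import-controller CRDs.
const MIGRATION_API = "migration.harvesterhci.io/v1beta1";

interface NetworkMapping {
  sourceNetwork: string;
  destinationNetwork: string;
}

// Hypothetical shape of the values collected by a UI form.
interface ImportForm {
  name: string;
  namespace: string;
  vmName: string;
  sourceName: string;
  sourceNamespace: string;
  sourceKind: "VmwareSource" | "OpenstackSource";
  networks: NetworkMapping[];
}

// Build the object a user would otherwise write as YAML and apply with kubectl.
function buildVirtualMachineImport(form: ImportForm) {
  if (!form.vmName.trim()) {
    throw new Error("virtual machine name is required");
  }
  return {
    apiVersion: MIGRATION_API,
    kind: "VirtualMachineImport",
    metadata: { name: form.name, namespace: form.namespace },
    spec: {
      virtualMachineName: form.vmName,
      networkMapping: form.networks.map((n) => ({ ...n })),
      // Reference to the previously created source resource.
      sourceCluster: {
        name: form.sourceName,
        namespace: form.sourceNamespace,
        kind: form.sourceKind,
        apiVersion: MIGRATION_API,
      },
    },
  };
}
```

Keeping the manifest construction in a plain function like this would make it testable independently of the Vue components that collect the input.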

Work during the Hack Week will be done in this fork in a branch called suse-hack-week-25, making progress publicly visible and open for contributions. When everything works out and the branch is in good shape, it will be submitted as a pull request to harvester-ui-extension to get it included in the next Harvester release.

Testing will focus on VMware since that's what is available in the lab environment (SUSE Virtualization 1.6 single-node cluster, ESXi 8.0 standalone host). Given that this is about UI and surfacing what the vm-import-controller handles, the implementation should work for OpenStack imports as well.

This project is also a personal challenge to learn Vue.js and get familiar with Rancher Extensions development, since harvester-ui-extension is built on that framework.

Goals

- Learn Vue.js and Rancher Extensions fundamentals required to finish the project

- Read and learn from other Rancher UI Extensions code, especially understanding the `harvester-ui-extension` code base

- Understand what the `vm-import-controller` and its CRDs require, identify ready to use components in the Rancher UI Extension API that can be leveraged

- Implement UI logic for creating and managing `VmwareSource` / `OpenstackSource` and `VirtualMachineImport` resources with all relevant configuration options and credentials

- Implement UI elements to display `VirtualMachineImport` status and errors

Resources

HEP and related discussion

- https://github.com/harvester/harvester/blob/master/enhancements/20220726-vm-migration.md

- https://github.com/harvester/harvester/issues/2274

- https://github.com/harvester/harvester/issues/4663

SUSE Virtualization VM Import Documentation

Rancher Extensions Documentation

Rancher UI Plugin Examples

Vue Router Essentials

Vue Router API

Vuex Documentation

Self-Scaling LLM Infrastructure Powered by Rancher by ademicev0

Description

The Problem

Running LLMs can get expensive and complex pretty quickly.

Today there are typically two choices:

- Use cloud APIs like OpenAI or Anthropic. Easy to start with, but costs add up at scale.

- Self-host everything - set up Kubernetes, figure out GPU scheduling, handle scaling, manage model serving... it's a lot of work.

What if there was a middle ground?

What if infrastructure scaled itself instead of making you scale it?

Can we use existing Rancher capabilities like CAPI, autoscaling, and GitOps to make this simpler instead of building everything from scratch?

Project Repository: github.com/alexander-demicev/llmserverless

What This Project Does

A key feature is hybrid deployment: requests can be routed based on complexity or privacy needs. Simple or low-sensitivity queries can use public APIs (like OpenAI), while complex or private requests are handled in-house on local infrastructure. This flexibility allows balancing cost, privacy, and performance - using cloud for routine tasks and on-premises resources for sensitive or demanding workloads.
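The routing decision described above can be sketched as a small pure function. This is an illustration, not the project's code or LiteLLM configuration. The request fields, the use of prompt length as a stand-in for complexity, and the threshold are all assumptions.

```typescript
type Target = "cloud-api" | "local";

// Hypothetical request description; how sensitivity is detected is out of scope.
interface LlmRequest {
  prompt: string;
  containsSensitiveData: boolean;
}

// Hypothetical policy: prompts longer than this count as "complex".
interface RoutingPolicy {
  maxCloudPromptChars: number;
}

// Private or complex requests stay on local infrastructure;
// everything else may go to a public API.
function chooseTarget(req: LlmRequest, policy: RoutingPolicy): Target {
  if (req.containsSensitiveData) return "local";
  return req.prompt.length > policy.maxCloudPromptChars ? "local" : "cloud-api";
}
```

Privacy is checked first so that a short but sensitive prompt never leaves the local cluster, whatever the complexity threshold is.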

A complete, self-scaling LLM infrastructure that:

- Scales to zero when idle (no idle costs)

- Scales up automatically when requests come in

- Adds more nodes when needed, removes them when demand drops

- Runs on any infrastructure - laptop, bare metal, or cloud

Think of it as "serverless for LLMs" - focus on building, the infrastructure handles itself.

How It Works

A combination of open source tools working together:

Flow:

- Users interact with OpenWebUI (chat interface)

- Requests go to LiteLLM Gateway

- LiteLLM routes requests to:

- Ollama (Knative) for local model inference (auto-scales pods)

- Or cloud APIs for fallback

Kubernetes-Based ML Lifecycle Automation by lmiranda

Description

This project aims to build a complete end-to-end Machine Learning pipeline running entirely on Kubernetes, using Go, and containerized ML components.

The pipeline will automate the lifecycle of a machine learning model, including:

- Data ingestion/collection

- Model training as a Kubernetes Job

- Model artifact storage in an S3-compatible registry (e.g. Minio)

- A Go-based deployment controller that automatically deploys new model versions to Kubernetes using Rancher

- A lightweight inference service that loads and serves the latest model

- Monitoring of model performance and service health through Prometheus/Grafana

The outcome is a working prototype of an MLOps workflow that demonstrates how AI workloads can be trained, versioned, deployed, and monitored using the Kubernetes ecosystem.

Goals

By the end of Hack Week, the project should:

Produce a fully functional ML pipeline running on Kubernetes with:

- Data collection job

- Training job container

- Storage and versioning of trained models

- Automated deployment of new model versions

- Model inference API service

- Basic monitoring dashboards

Showcase a Go-based deployment automation component, which scans the model registry and automatically generates & applies Kubernetes manifests for new model versions.
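The scan-and-generate step can be sketched as follows. The project plans this component in Go; TypeScript is used here purely for illustration. The object key layout (`models/<name>/v<N>/...`), the `MODEL_KEY` environment variable, and the function names are assumptions, and the sketch stops at producing the manifest object rather than applying it.

```typescript
interface ModelVersion {
  model: string;
  version: number;
  key: string;
}

// Parse registry keys such as "models/churn/v12/model.bin" (hypothetical layout).
function parseKey(key: string): ModelVersion | undefined {
  const m = /^models\/([a-z0-9-]+)\/v(\d+)\//.exec(key);
  if (!m) return undefined;
  return { model: m[1], version: Number(m[2]), key };
}

// Keep only the highest version number seen for each model.
function latestVersions(keys: string[]): Map<string, ModelVersion> {
  const latest = new Map<string, ModelVersion>();
  for (const key of keys) {
    const parsed = parseKey(key);
    if (!parsed) continue;
    const current = latest.get(parsed.model);
    if (!current || parsed.version > current.version) {
      latest.set(parsed.model, parsed);
    }
  }
  return latest;
}

// Generate an apps/v1 Deployment for the inference service of one model version.
function inferenceDeployment(mv: ModelVersion, image: string) {
  const name = `${mv.model}-inference`;
  const labels = { app: name, "model-version": `v${mv.version}` };
  return {
    apiVersion: "apps/v1",
    kind: "Deployment",
    metadata: { name, labels },
    spec: {
      replicas: 1,
      selector: { matchLabels: { app: name } },
      template: {
        metadata: { labels },
        spec: {
          containers: [
            // The container is told which artifact to load via an env var.
            { name: "inference", image, env: [{ name: "MODEL_KEY", value: mv.key }] },
          ],
        },
      },
    },
  };
}
```

Versions are compared numerically, so `v10` is treated as newer than `v2`, which a plain string sort of the keys would get wrong.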

Enable continuous improvement by making the system modular and extensible (e.g., additional models, metrics, autoscaling, or drift detection can be added later).

Prepare a short demo explaining the end-to-end process and how new models flow through the system.

Resources

Updates

- Training pipeline and datasets

- Inference Service py

Preparing KubeVirtBMC for project transfer to the KubeVirt organization by zchang

Description

KubeVirtBMC is preparing to transfer the project to the KubeVirt organization. One requirement is to enhance the modeling design's security. The current v1alpha1 API (the VirtualMachineBMC CRD) was designed during the proof-of-concept stage. It's immature and inherently insecure due to its cross-namespace object references, exposing security concerns from an RBAC perspective.

The other long-awaited feature is the ability to mount virtual media so that virtual machines can boot from remote ISO images.

Goals

- Deliver the v1beta1 API and its corresponding controller implementation

- Enable the Redfish virtual media mount function for KubeVirt virtual machines

Resources

- The KubeVirtBMC repo: https://github.com/starbops/kubevirtbmc

- The new v1beta1 API: https://github.com/starbops/kubevirtbmc/issues/83

- Redfish virtual media mount: https://github.com/starbops/kubevirtbmc/issues/44

Exploring Modern AI Trends and Kubernetes-Based AI Infrastructure by jluo

Description

Build a solid understanding of the current landscape of Artificial Intelligence and how modern cloud-native technologies—especially Kubernetes—support AI workloads.

Goals

Use Gemini Learning Mode to guide the exploration, surface relevant concepts, and structure the learning journey:

- Gain insight into the latest AI trends, tools, and architectural concepts.

- Understand how Kubernetes and related cloud-native technologies are used in the AI ecosystem (model training, deployment, orchestration, MLOps).

Resources

Red Hat AI Topic Articles

- https://www.redhat.com/en/topics/ai

Kubeflow Documentation

- https://www.kubeflow.org/docs/

Q4 2025 CNCF Technology Landscape Radar report:

- https://www.cncf.io/announcements/2025/11/11/cncf-and-slashdata-report-finds-leading-ai-tools-gaining-adoption-in-cloud-native-ecosystems/

- https://www.cncf.io/wp-content/uploads/2025/11/cncfreporttechradar_111025a.pdf

Agent-to-Agent (A2A) Protocol

- https://developers.googleblog.com/en/a2a-a-new-era-of-agent-interoperability/