Description

One feature of the Uyuni system management tool is the ability to build custom images. Currently Uyuni supports only the Kiwi image builder.

Kiwi, however, is not the only image building system out there. With the goal of also becoming familiar with other systems, this project aims to add support for Edge Image Builder (EIB) and systemd's mkosi.

Goals

Uyuni is able to

- provision EIB and mkosi build hosts

- build EIB and mkosi images and store them

Resources

- Uyuni - https://github.com/uyuni-project/uyuni

- Edge Image builder - https://github.com/suse-edge/edge-image-builder

- mkosi - https://github.com/systemd/mkosi

This project is part of:

Hack Week 24

Comments

about 1 year ago by oholecek

Progress during the Hackweek

- adapted service salt states for both EIB and mkosi and also updated original Kiwi (handling build host preparation)

- adapted build image salt state for mkosi and original Kiwi (for actual image building)

- adapted Java profile creation and editing to support EIB and mkosi

TODO next:

- adapt Java side to select correct build host variant

- post build image inspection for EIB and mkosi and image collection

Similar Projects

Testing and adding GNU/Linux distributions on Uyuni by juliogonzalezgil

Join the Gitter channel! https://gitter.im/uyuni-project/hackweek

Uyuni is a configuration and infrastructure management tool that saves you time and headaches when you have to manage and update tens, hundreds or even thousands of machines. It also manages configuration, can run audits, build image containers, monitor and much more!

Currently there are a few distributions that are completely untested on Uyuni or SUSE Manager (AFAIK), or that have not been tested for a long time. It would be interesting to know how hard it would be to work with them and, if possible, to fix whatever is broken.

For newcomers, the easiest distributions are those based on DEB or RPM packages. Distributions with other package formats are doable, but will require adapting the Python and Java code to be able to sync and analyze such packages (and if salt does not support those packages, it will need changes as well). So if you want a distribution with other packages, make sure you are comfortable handling such changes.

No developer experience? No worries! We had non-developer contributors in the past, and we are ready to help as long as you are willing to learn. If you don't want to code at all, you can also help us prepare the documentation after someone else has the initial code ready, or you could also help with testing :-)

The idea is testing Salt (including bootstrapping with bootstrap script) and Salt-ssh clients

To consider that a distribution has basic support, we should cover at least (points 3-6 are to be tested for both salt minions and salt ssh minions):

- Reposync (this will require using spacewalk-common-channels and adding channels to the .ini file)

- Onboarding (salt minion from UI, salt minion from bootstrap script, and salt-ssh minion) (this will probably require adding OS to the bootstrap repository creator)

- Package management (install, remove, update...)

- Patching

- Applying any basic salt state (including a formula)

- Salt remote commands

- Bonus point: Java part for product identification, and monitoring enablement

- Bonus point: sumaform enablement (https://github.com/uyuni-project/sumaform)

- Bonus point: Documentation (https://github.com/uyuni-project/uyuni-docs)

- Bonus point: testsuite enablement (https://github.com/uyuni-project/uyuni/tree/master/testsuite)

If something is breaking: we can try to fix it, but the main idea is to research how well supported it is right now. Beyond that it's up to each project member how much to hack :-)

- If you don't have knowledge about some of the steps: ask the team

- If you still don't know what to do: switch to another distribution and keep testing.

This card is for EVERYONE, not just developers. Seriously! We had people from other teams helping that were not developers, and added support for Debian and new SUSE Linux Enterprise and openSUSE Leap versions :-)

In progress/done for Hack Week 25

Guide

We started writing a Guide: Adding a new client GNU Linux distribution to Uyuni at https://github.com/uyuni-project/uyuni/wiki/Guide:-Adding-a-new-client-GNU-Linux-distribution-to-Uyuni, to make things easier for everyone, especially those not too familiar with Uyuni or not technical.

openSUSE Leap 16.0

The distribution we all love!

https://en.opensuse.org/openSUSE:Roadmap#DRAFTScheduleforLeap16.0

Current status: we started last year, and it's complete now for Hack Week 25! :-D

- [W] Reposync (this will require using spacewalk-common-channels and adding channels to the .ini file). NOTE: Done; the client tools for SLMicro6 are being used, as those for SLE16.0/openSUSE Leap 16.0 are not available yet

- [W] Onboarding (salt minion from UI, salt minion from bootstrap script, and salt-ssh minion) (this will probably require adding OS to the bootstrap repository creator)

- [W] Package management (install, remove, update...). Works, even reboot requirement detection

Set Uyuni to manage edge clusters at scale by RDiasMateus

Description

Prepare a PoC on how to use MLM to manage edge clusters. Those clusters are normally identical across locations, and we have a large number of them.

The goal is to produce a set of sets/best practices/scripts to help users manage this kind of setup.

Goals

step 1: Manual set-up

Goal: Have a running application in k3s and be able to update it using System Upgrade Controller (SUC)

- Deploy Micro 6.2 machine

- Deploy k3s - single node

  - https://docs.k3s.io/quick-start

- Build/find a simple web application (static page)

  - Build/find a helmchart to deploy the application

- Deploy the application on the k3s cluster

- Install App updates through helm update

- Install OS updates using MLM

step 2: Automate day 1

Goal: Trigger the application deployment and update from MLM

- Salt states for application (with static data)

- Deploy the application helmchart, if not present

- install app updates through helmchart parameters

- Link it to GIT

- Define how to link the state to the machines (based on some pillar data? Using configuration channels by importing the state? Naming convention?)

- Use git update to trigger helmchart app update

- Recurrent state applying configuration channel?
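The "deploy if not present" and "update through chart parameters" items above can be covered by a single idempotent Helm invocation, `helm upgrade --install`. The following is a minimal sketch of how a wrapper (for example one called from a Salt state) might build that command line. The release name, chart path, namespace and values used here are hypothetical placeholders, not part of the project.

```python
from typing import Dict, List


def helm_upgrade_install_cmd(
    release: str, chart: str, namespace: str, values: Dict[str, str]
) -> List[str]:
    """Build an idempotent Helm command line.

    `helm upgrade --install` installs the release when it is missing and
    upgrades it otherwise, so the same command serves day 1 and updates.
    """
    cmd = [
        "helm", "upgrade", "--install", release, chart,
        "--namespace", namespace, "--create-namespace",
    ]
    # Sort the keys so the generated command is stable between runs.
    for key in sorted(values):
        cmd += ["--set", f"{key}={values[key]}"]
    return cmd


if __name__ == "__main__":
    # Hypothetical release, chart path and value, for illustration only.
    print(" ".join(helm_upgrade_install_cmd(
        "webapp", "./charts/webapp", "demo", {"image.tag": "1.0.1"})))
```

Bumping a value such as `image.tag` in Git and re-applying the state would then be enough to roll out an application update, assuming the state re-runs this command.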

step 3: Multi-node cluster

Goal: Use SUC to update a multi-node cluster.

- Create a multi-node cluster

- Deploy application

- call the helm update/install only on control plane?

- Install App updates through helm update

- Prepare a SUC for OS update (k3s also? How?)

- https://github.com/rancher/system-upgrade-controller

- https://documentation.suse.com/cloudnative/k3s/latest/en/upgrades/automated.html

- Update/deploy the SUC?

- Update/deploy the SUC CRD with the update procedure
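As a starting point for the "SUC CRD with the update procedure" item, here is a minimal sketch that builds a Plan resource for upgrading k3s on control-plane nodes, following the shape shown in the k3s automated upgrades documentation linked above. It covers only the k3s part, not OS updates. The plan name and version string are hypothetical examples, and the function name is made up for illustration.

```python
import json
from typing import Any, Dict


def k3s_upgrade_plan(name: str, version: str, concurrency: int = 1) -> Dict[str, Any]:
    """Return a system-upgrade-controller Plan for control-plane nodes."""
    return {
        "apiVersion": "upgrade.cattle.io/v1",
        "kind": "Plan",
        "metadata": {"name": name, "namespace": "system-upgrade"},
        "spec": {
            # Upgrade one node at a time and cordon it while upgrading.
            "concurrency": concurrency,
            "cordon": True,
            "nodeSelector": {
                "matchExpressions": [
                    {
                        "key": "node-role.kubernetes.io/control-plane",
                        "operator": "In",
                        "values": ["true"],
                    }
                ]
            },
            "serviceAccountName": "system-upgrade",
            "upgrade": {"image": "rancher/k3s-upgrade"},
            "version": version,
        },
    }


if __name__ == "__main__":
    # Hypothetical plan name and version; kubectl accepts JSON manifests too.
    print(json.dumps(k3s_upgrade_plan("server-plan", "v1.33.4+k3s1"), indent=2))
```

The JSON output could be stored next to the Salt states and applied with `kubectl apply -f`, which would keep the update procedure itself under version control.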

Uyuni Saltboot rework by oholecek

Description

When Uyuni switched over to containerized proxies, we had to abandon the Salt-based saltboot infrastructure we had before. Uyuni already had integration with a Cobbler provisioning server, so the saltboot infrastructure was re-implemented on top of this Cobbler integration.

What was not obvious from the start was that Cobbler, for all its features, is woefully slow when dealing with saltboot-sized environments. We made some performance improvements, introduced transactions, and generally tried to make this setup usable. However, the underlying slowness remained.

Goals

This project is not trying to invent new things; it is just finally implementing the saltboot infrastructure directly in the Uyuni server core.

Instead of having Cobbler generate grub and pxelinux configurations for all the thousands of systems and branches, we will provide a GET access point to retrieve the grub or pxelinux file during boot:

/saltboot/group/grub/$fqdn for groups, and similarly /saltboot/system/grub/$mac for systems.

Next, we adapt our tftpd translator to query these points when asked for the default or a MAC-based config.

Lastly, a similar thing needs to be done on our Apache server when HTTP UEFI boot is used.
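To illustrate the translation step, here is a minimal sketch of how a TFTP request for a pxelinux config file could be mapped to one of the GET access points. It relies on the standard pxelinux convention of requesting `pxelinux.cfg/01-<mac-with-dashes>` before falling back to `pxelinux.cfg/default`. The `pxelinux` path segment, the colon-separated MAC format, and the idea that `$fqdn` is the branch FQDN are assumptions made for the example; only the grub paths are given above.

```python
import re
from typing import Optional

# pxelinux asks for "01-" (ARP type Ethernet) followed by the MAC with dashes.
MAC_FILE = re.compile(r"^01-((?:[0-9a-f]{2}-){5}[0-9a-f]{2})$")


def saltboot_path(tftp_file: str, branch_fqdn: str) -> Optional[str]:
    """Map a pxelinux config request to a saltboot GET path (hypothetical)."""
    prefix = "pxelinux.cfg/"
    if not tftp_file.startswith(prefix):
        return None  # Not a config request; serve it as a normal file.
    name = tftp_file[len(prefix):].lower()
    if name == "default":
        # Group-wide configuration for the whole branch.
        return f"/saltboot/group/pxelinux/{branch_fqdn}"
    match = MAC_FILE.match(name)
    if match:
        # Per-system configuration, looked up by MAC address.
        mac = match.group(1).replace("-", ":")
        return f"/saltboot/system/pxelinux/{mac}"
    return None  # e.g. hex IP address lookups are not handled in this sketch.


if __name__ == "__main__":
    print(saltboot_path("pxelinux.cfg/01-52-54-00-12-34-56", "branch1.example.com"))
```

With this approach nothing has to be pre-generated per system: the configuration is rendered only when a terminal actually boots and asks for it.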

Resources

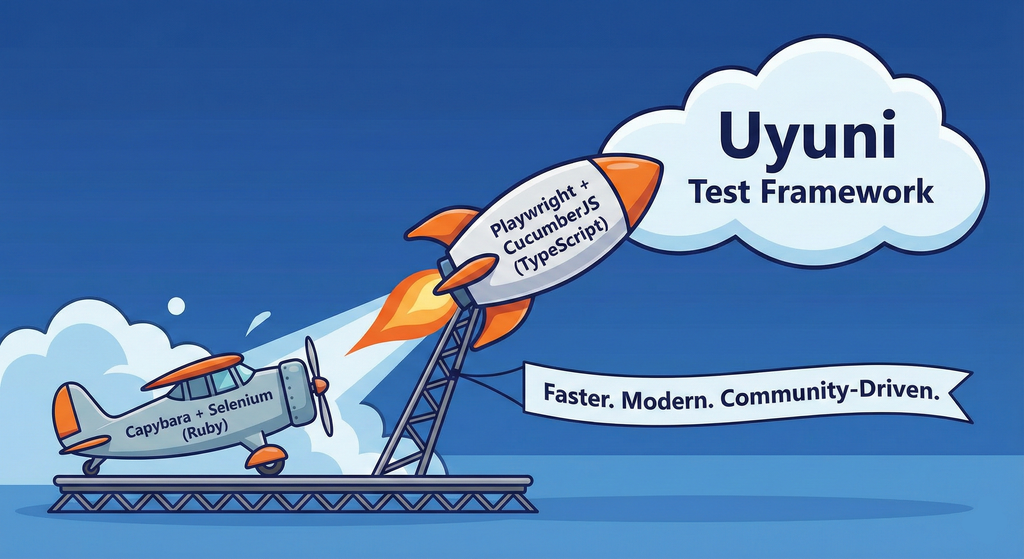

Move Uyuni Test Framework from Selenium to Playwright + AI by oscar-barrios

Description

This project aims to migrate the existing Uyuni Test Framework from Selenium to Playwright. The move will improve the stability, speed, and maintainability of our end-to-end tests by leveraging Playwright's modern features. We'll be rewriting the current Selenium code in Ruby to Playwright code in TypeScript, which includes updating the test framework runner, step definitions, and configurations. This is also necessary because we're moving from Cucumber Ruby to CucumberJS.

If you're still curious about the AI in the title, it was just a way to grab your attention. Thanks for your understanding.

Nah, let's be honest: AI helped a lot to vibe code a good part of the Ruby methods of the Test framework, moving them to TypeScript, along with the migration from Capybara to Playwright. I've been using "Cline" as a plugin for WebStorm IDE, using the Gemini API behind it.

Goals

- Migrate Core tests including Onboarding of clients

- Improve test reliability: Measure and confirm a significant reduction of flakiness.

- Implement a robust framework: Establish a well-structured and reusable Playwright test framework using CucumberJS

Resources

- Existing Uyuni Test Framework (Cucumber Ruby + Capybara + Selenium)

- My Template for CucumberJS + Playwright in TypeScript

- Started Hackweek Project

Enable more features in mcp-server-uyuni by j_renner

Description

I would like to contribute to mcp-server-uyuni (the MCP server for Uyuni / Multi-Linux Manager) by exposing additional features as tools. There are lots of relevant features to be found throughout the API, for example:

- System operations and infos

- System groups

- Maintenance windows

- Ansible

- Reporting

- ...

At the end of the week I managed to enable basic system group operations:

- List all system groups visible to the user

- Create new system groups

- List systems assigned to a group

- Add and remove systems from groups
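For reference, the operations listed above correspond to calls in the `systemgroup` namespace of the Uyuni XML-RPC API. The following is a minimal sketch of thin helpers around two of them. It is not the code from mcp-server-uyuni, and the helper names are made up for illustration. The `client` argument is expected to behave like an `xmlrpc.client.ServerProxy` pointed at the server's `/rpc/api` endpoint.

```python
from typing import Any, List


def group_names(client: Any, session_key: str) -> List[str]:
    """Return the names of all system groups visible to the user."""
    groups = client.systemgroup.listAllGroups(session_key)
    return sorted(group["name"] for group in groups)


def add_systems(client: Any, session_key: str, group: str, system_ids: List[int]) -> int:
    """Add systems to a group; passing False as the last flag would remove them."""
    return client.systemgroup.addOrRemoveSystems(session_key, group, system_ids, True)


# Usage against a real server (host name and credentials are placeholders):
#
#   import xmlrpc.client
#   client = xmlrpc.client.ServerProxy("https://uyuni.example.com/rpc/api")
#   key = client.auth.login("admin", "secret")
#   print(group_names(client, key))
#   client.auth.logout(key)
```

Keeping the API client as a parameter makes such helpers easy to exercise with a fake object, without needing a running server.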

Goals

- Set up test environment locally with the MCP server and client + a recent MLM server [DONE]

- Identify features and use cases offering a benefit with limited effort required for enablement [DONE]

- Create a PR to the repo [DONE]

Resources

SUSE Edge Image Builder json schema by eminguez

Description

The current SUSE Edge Image Builder tool doesn't provide a JSON schema that defines the configuration file syntax, values, etc. (Yes, I know EIB uses YAML, but it seems JSON Schema can be used to validate YAML documents, yay!)

Having a json schema will make integrations straightforward, as once the json schema is in place, it can be used as the interface for other tools to consume and generate EIB definition files (like TUI wizards, web UIs, etc.)
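JSON Schema works for YAML because validation runs on the parsed data (nested maps, lists and scalars), not on the text. Here is a minimal sketch of that idea: a hand-written schema fragment and a tiny validator that understands only `type`, `required`, `properties` and `enum`. The schema content, including the allowed `arch` and `imageType` values, is an assumption made for illustration and is not the schema from the pull request; a real integration would use a full JSON Schema library.

```python
from typing import Any, Dict, List

# Hypothetical fragment; field names follow a typical EIB definition file.
EIB_SCHEMA: Dict[str, Any] = {
    "type": "object",
    "required": ["apiVersion", "image"],
    "properties": {
        "apiVersion": {"type": "string"},
        "image": {
            "type": "object",
            "required": ["arch", "baseImage", "imageType", "outputImageName"],
            "properties": {
                "arch": {"type": "string", "enum": ["x86_64", "aarch64"]},
                "imageType": {"type": "string", "enum": ["iso", "raw"]},
                "baseImage": {"type": "string"},
                "outputImageName": {"type": "string"},
            },
        },
    },
}

_TYPES = {"object": dict, "string": str}


def validate(doc: Any, schema: Dict[str, Any], path: str = "$") -> List[str]:
    """Return a list of error messages; an empty list means the document is valid."""
    expected = schema.get("type")
    if expected and not isinstance(doc, _TYPES[expected]):
        return [f"{path}: expected {expected}"]
    errors: List[str] = []
    if "enum" in schema and doc not in schema["enum"]:
        errors.append(f"{path}: {doc!r} not in {schema['enum']}")
    if isinstance(doc, dict):
        for key in schema.get("required", []):
            if key not in doc:
                errors.append(f"{path}.{key}: required")
        for key, sub in schema.get("properties", {}).items():
            if key in doc:
                errors.extend(validate(doc[key], sub, f"{path}.{key}"))
    return errors


if __name__ == "__main__":
    # This dict stands in for the result of parsing a YAML definition file.
    config = {"apiVersion": "1.0", "image": {"arch": "sparc", "imageType": "iso"}}
    for error in validate(config, EIB_SCHEMA):
        print(error)
```

The same schema could also drive the other direction, for example a wizard that only offers the values listed under `enum`.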

I'll make use of AI tools for this so I'd learn more about vibe coding, agents, etc.

Goals

- Learn about json schemas

- Try to implement something that can take the EIB source code and output an initial json schema definition

- Create a PR for EIB to be adopted

- Learn more about AI tools and how those can help on similar projects.

Resources

- json-schema.org

- suse-edge/edge-image-builder

- Any AI tool that can help me!

Result

Pull Request created! https://github.com/suse-edge/edge-image-builder/pull/821

I've extensively used gemini via the VScode "gemini code assist" plugin but I found it not too good... my workstation froze for minutes using it... I have a pretty beefy macbook pro M2 and AFAIK the model is being executed on the cloud... so I basically spent a few days fighting with it... Then I switched to antigravity and its agent mode... and it worked much better.

I've ended up learning a few things about "prompting", json schemas in general, some golang and AI in general :)

SUSE Edge Image Builder MCP by eminguez

Description

Based on my other hackweek project, SUSE Edge Image Builder's JSON Schema, I would also like to build an MCP to be able to generate EIB config files the AI way.

Realistically I don't think I'll be able to have something consumable at the end of this hackweek but at least I would like to start exploring MCPs, the difference between an API and MCP, etc.

Goals

- Familiarize myself with MCPs

- Unrealistic: Have an MCP that can generate an EIB config file

Resources

Result

https://github.com/e-minguez/eib-mcp

I've extensively used antigravity and its agent mode to code this. This heavily uses https://hackweek.opensuse.org/25/projects/suse-edge-image-builder-json-schema for the MCP to be built.

I've ended up learning a lot of things about "prompting", json schemas in general, some golang, MCPs and AI in general :)

Example:

Generate an Edge Image Builder configuration for an ISO image based on slmicro-6.2.iso, targeting x86_64 architecture. The output name should be 'my-edge-image' and it should install to /dev/sda. It should deploy a 3 nodes kubernetes cluster with nodes names "node1", "node2" and "node3" as:

* hostname: node1, IP: 1.1.1.1, role: initializer

* hostname: node2, IP: 1.1.1.2, role: agent

* hostname: node3, IP: 1.1.1.3, role: agent

The kubernetes version should be k3s 1.33.4-k3s1 and it should deploy a cert-manager helm chart (the latest one available according to https://cert-manager.io/docs/installation/helm/). It should create a user called "suse" with password "suse" and set ntp to "foo.ntp.org". The VIP address for the API should be 1.2.3.4

Generates:

```
apiVersion: "1.0"
image:
  arch: x86_64
  baseImage: slmicro-6.2.iso
  imageType: iso
  outputImageName: my-edge-image
kubernetes:
  helm:
    charts:
      - name: cert-manager
        repositoryName: jetstack
```
